Digital Strategy Review | 2026

Agentic Engineering Patterns Launch: Now That Coding Is Cheap, Engineering Guardrails Are the Real Ticket | Uncle Guo’s AI Daily

By Uncle Guo · Reading Time / 8 Min

Foreword

Over the past year, AI coding has fostered a common illusion: as long as models get stronger, context windows get longer, and tools get more comprehensive, software will automatically become faster, cheaper, and more reliable.

However, what truly separates top-tier teams isn’t “whether they can write the code,” but “how they ensure it is correct, stable, and maintainable after it’s written.”

In today’s headline, I want to focus on the Agentic Engineering Patterns project initiated by Simon Willison. It is not just another “prompt collection,” but a move to push AI coding from individual trickery toward a reusable, trainable, and deliverable engineering methodology.

01

Today’s Headline

Simon Willison recently launched and is continuously updating the Agentic Engineering Patterns project. Its goal is clear: to systematize the effective workflows he verified while using coding agents like Claude Code and Codex into reusable “engineering patterns.”

This deserves the headline not because it invents a new concept, but because it articulates the reality many teams are currently facing:

- The marginal cost of code generation is falling rapidly, but this does not mean the cost of delivery is falling.

- When “writing code” becomes too easy, the truly expensive parts emerge: acceptance, verification, rollback, review, and evolution.

- Coding agents are not “self-driving cars”; they are more like “exoskeletons.” They can amplify your engineering capabilities, but only if you are willing to build the guardrails, write clear acceptance criteria, and control the pace.

In short, the value of Agentic Engineering Patterns isn’t teaching you a smarter prompt; it’s about turning the process of “integrating agents into an engineering system” into a workflow that teams can follow, practice, and review.

02

Headline Analysis: Why This Matters More

Many people’s expectations for AI coding stop at “efficiency gains”: delivering more features faster. But in the real world of engineering, the bottleneck is rarely writing code; it’s turning that code into a system you can take responsibility for.

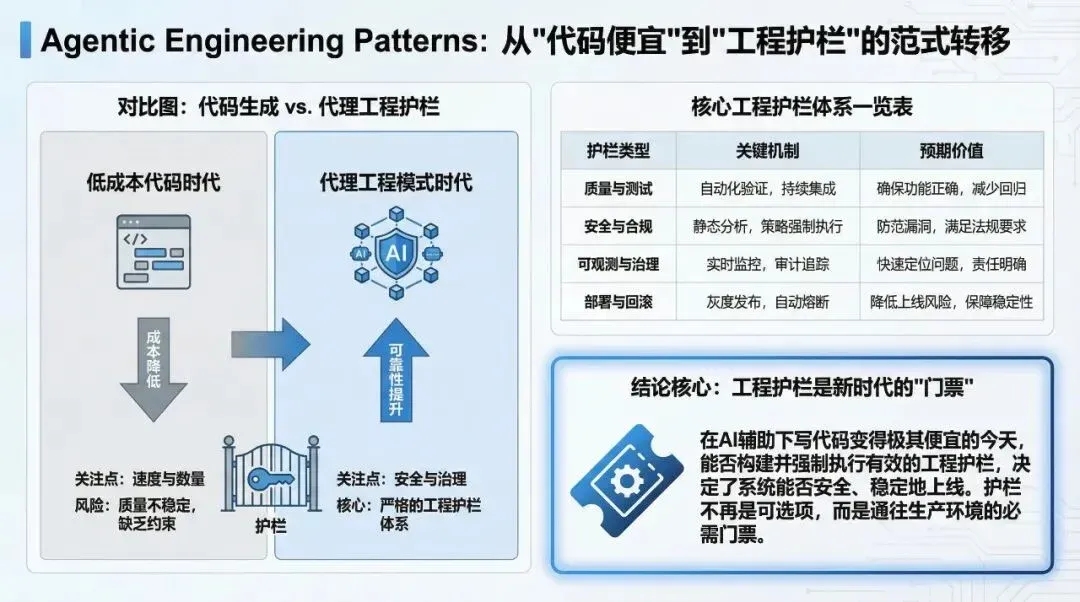

The significance of Agentic Engineering Patterns is that it reminds us that software engineering in the AI era is undergoing a “cost realignment.”

1) When “writing code” becomes cheap, “verifiability” becomes the most expensive asset

In the past, the “technical debt” we talked about was usually caused by urgent requirements, insufficient testing, and rushed deployments. Once AI pushes code production speed to the maximum, this problem will only become more acute.

This is because agents are best at completing local tasks: modifying a file, adding a logic block, running a command, or fixing a bug. But they are not good at taking responsibility for system-level consequences:

- Does this change break boundary conditions?

- Will performance regressions be overlooked?

- Has observability kept pace?

- Who will maintain this code a year from now?

Therefore, the true “hard power” in the AI era will shift from typing speed to two core capabilities:

- Writing acceptance criteria that machines can verify (tests, assertions, comparable artifacts, CI gates).

- Writing risk control into the workflow (phased replacement, rollback capabilities, traceability, and reproducibility).

Once you get these two things right, the agent is no longer a risk amplifier, but a risk-hedging tool.

2) “Vibe Coding” will become common, but enterprises need “Agentic Engineering”

As AI lowers the barrier to programming, more non-professionals will participate in software creation—this is a trend.

The problem is: “Vibe coding” can solve “getting it done,” but it cannot solve “long-term accountability.”

When your system handles payments, data, permissions, or compliance—even if it’s just an internal tool—once the business relies on it, two types of costs emerge:

- Incident costs (production outages, data issues, compliance risks).

- Maintenance costs (the system becomes too rigid to change, nobody dares to touch it, and every update gets slower).

This is why work like Agentic Engineering Patterns—which turns experience into methodology—is critical: it transforms “individual experience” into “organizational capability.”

In other words, you will see a new division of labor in the future:

- More and more people will know how to write prompts.

- But those who can stably embed agents into an engineering system and enable teams to produce consistently will be much scarcer.

3) The true watershed is whether you put agents into a “metronome”

Many teams fail at AI coding not because the models are bad, but because the rhythm is out of control:

- The agent is allowed to change too much at once, so no one dares to review the result.

- Requirements are not clearly broken down and acceptance criteria are not clearly defined, so the launch ends up resting on “it looks right.”

- There is no rollback path, so the team falls back on manual firefighting when things go wrong.

And what is a “metronome”? It is your engineering system:

- Every step can run tests.

- Every step can show differences (diffs).

- Every step can be rolled back.

- Every step can explain why the change was made.

When you put an agent into this metronome, it becomes a reliable engineering amplifier; otherwise, it only accelerates chaos.
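To make the four beats concrete, here is a minimal sketch in Python. It is only an illustration under stated assumptions: `Workspace`, `Step`, and `still_adds` are hypothetical names, the “repository” is a single in-memory string, and the “test suite” is one toy check. In a real project, version control and CI would play these roles.

```python
import difflib
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    reason: str                   # why the change is being made
    edit: Callable[[str], str]    # hypothetical stand-in for one small agent edit


@dataclass
class Workspace:
    source: str
    log: list = field(default_factory=list)

    def run_step(self, step: Step, check: Callable[[str], bool]) -> bool:
        snapshot = self.source                      # rollback point
        self.source = step.edit(snapshot)
        diff = "\n".join(difflib.unified_diff(
            snapshot.splitlines(), self.source.splitlines(),
            "before", "after", lineterm=""))        # the diff for this step
        passed = check(self.source)                 # the tests for this step
        if not passed:
            self.source = snapshot                  # roll back
        status = "KEPT" if passed else "ROLLED BACK"
        self.log.append(f"{status}: {step.reason}\n{diff}")
        return passed


def still_adds(source: str) -> bool:
    """Toy acceptance check: the edited code must still define a correct add()."""
    namespace = {}
    exec(source, namespace)
    return namespace["add"](2, 3) == 5


ws = Workspace("def add(a, b):\n    return a + b\n")
kept = ws.run_step(
    Step("add type hints", lambda s: s.replace("(a, b)", "(a: int, b: int)")),
    still_adds)
reverted = ws.run_step(
    Step("simplify arithmetic", lambda s: s.replace("a + b", "a - b")),
    still_adds)
print(kept, reverted)
print(ws.log[1])
```

The first step passes the check and stays; the second breaks `add()` and is rolled back, while the log still records the reason and the diff of the attempt.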

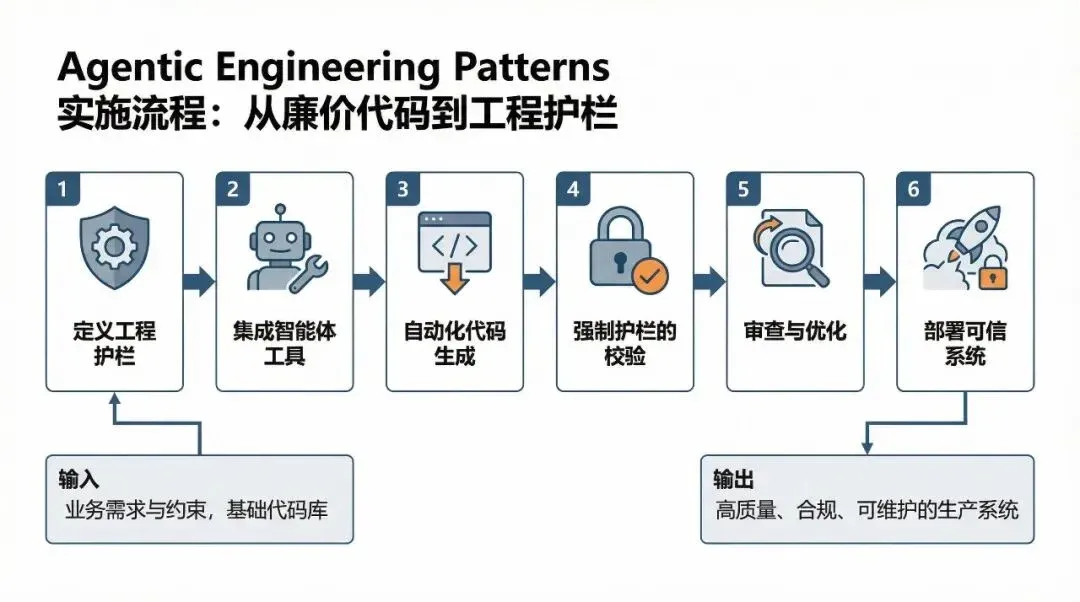

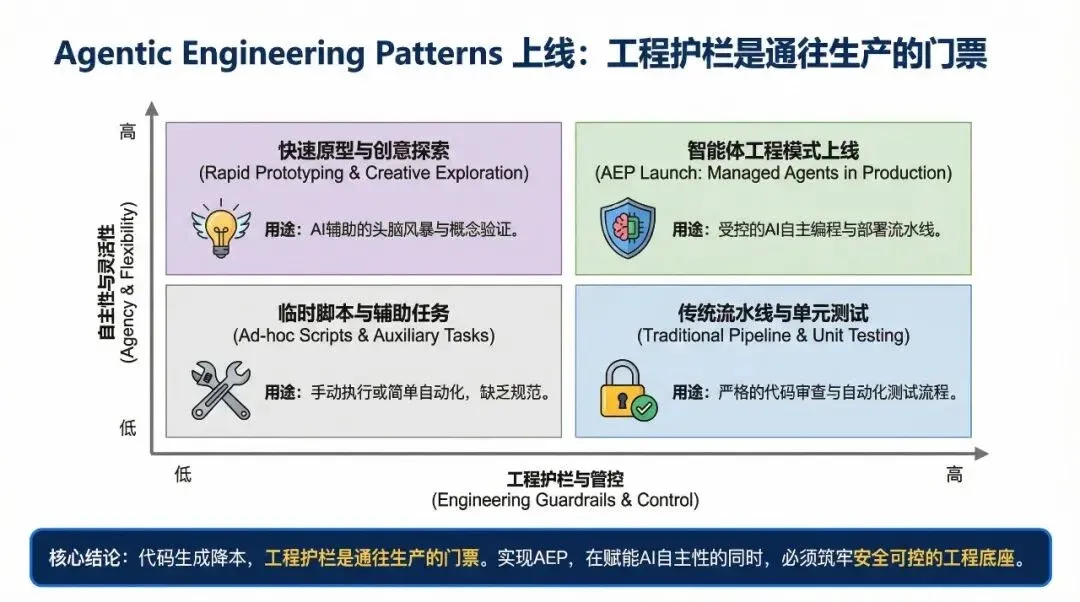

Flowchart used to explain the methodology execution path.

03

Uncle Guo’s Perspective

If you are a technical lead considering turning tools like Claude Code or Codex into true team productivity, I suggest not obsessing over “which model is stronger” and instead establishing three hard rules first.

Rule 1: Write acceptance criteria that machines can verify

Do not let “it looks okay to me” be the acceptance standard. You must write acceptance criteria that machines can run:

- Critical paths must have tests (even if it’s just the crudest end-to-end smoke test).

- If possible, create comparable artifacts: serialized results, ASTs, bytecode, API responses, or key reports.

- For high-risk changes, write assertions for things that “must not happen” (e.g., privilege escalation, data loss, abnormal amounts).
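As a rough sketch of the last two points, the Python snippet below compares a serialized artifact from an old and a new implementation and adds one “must not happen” guard. Everything in it is an assumption made for illustration: `legacy_total`, `rewritten_total`, and the `ORDER` data are invented stand-ins, not code from any real system.

```python
import json
from decimal import Decimal


def legacy_total(items):
    """Stand-in for the existing implementation."""
    return sum((Decimal(i["price"]) * i["qty"] for i in items), Decimal("0"))


def rewritten_total(items):
    """Stand-in for the agent-produced replacement."""
    total = Decimal("0")
    for item in items:
        total += Decimal(item["price"]) * item["qty"]
    return total


def artifact(items, total_fn) -> str:
    """Serialize the result deterministically so two runs compare byte for byte."""
    return json.dumps({"lines": len(items), "total": str(total_fn(items))},
                      sort_keys=True)


def check_must_not_happen(total: Decimal) -> None:
    """Guard for an outcome that must never occur, whatever the implementation."""
    if total < 0:
        raise ValueError(f"abnormal amount: {total}")


ORDER = [{"price": "19.99", "qty": 2}, {"price": "5.00", "qty": 1}]

same = artifact(ORDER, legacy_total) == artifact(ORDER, rewritten_total)
check_must_not_happen(rewritten_total(ORDER))
print("artifacts match:", same)
```

The point of the serialized artifact is that “the new code behaves like the old code” stops being an opinion and becomes a string comparison a CI job can run.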

Rule 2: Break tasks down so the agent runs within guardrails

Agents are good at “finishing a small task,” not “carrying a large project.” You need to break large projects into a series of small, verifiable steps:

- Let it do only one thing at a time: translate one file, add one test, fix one compilation error, align one interface.

- Every step must pass CI; CI is the team’s “stabilizer.”

- It is better to be slow than to let it change so much that you cannot review it.
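One way to enforce “small enough to review” is a change budget that runs before review. The Python sketch below is a hypothetical gate, not a feature of any particular CI product: the `Budget` defaults (one file, 40 changed lines) are arbitrary assumptions, and `changes` is an invented in-memory mapping of file path to before and after text.

```python
import difflib
from dataclasses import dataclass


@dataclass(frozen=True)
class Budget:
    max_files: int = 1            # assumed default: one file per step
    max_changed_lines: int = 40   # assumed default: small enough to review


def changed_lines(before: str, after: str) -> int:
    """Count added plus removed lines between two versions of a file."""
    diff = difflib.ndiff(before.splitlines(), after.splitlines())
    return sum(1 for line in diff if line.startswith(("+ ", "- ")))


def within_budget(changes: dict, budget: Budget = Budget()) -> tuple:
    """changes maps a file path to its (before, after) text."""
    touched = {path: changed_lines(b, a) for path, (b, a) in changes.items()}
    total = sum(touched.values())
    if len(touched) > budget.max_files:
        return False, f"{len(touched)} files touched, budget is {budget.max_files}"
    if total > budget.max_changed_lines:
        return False, f"{total} lines changed, budget is {budget.max_changed_lines}"
    return True, f"{total} lines changed in {len(touched)} file(s)"


small = {"parser.py": ("a\nb\nc\n", "a\nB\nc\nd\n")}
sprawling = {"parser.py": ("a\n", "b\n"), "lexer.py": ("x\n", "y\n")}
print(within_budget(small))
print(within_budget(sprawling))
```

The exact numbers matter less than having a number at all: once the budget is explicit, an oversized step is rejected by the pipeline instead of being waved through by a tired reviewer.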

Rule 3: Use adversarial review instead of “trusting your gut”

In the AI era, reviews should be more like adversarial drills:

- Use different models/people to poke holes: look for boundary conditions, regression risks, and security vulnerabilities.

- Review conclusions must result in changes: add tests, add logs, add assertions, rather than just writing a bunch of comments.

- Critical systems must retain a human-sign-off threshold. It’s not about distrusting AI; it’s about engineering accountability.
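The human sign-off threshold can also be written down as policy rather than left to habit. The Python sketch below assumes a hypothetical repository layout: the `SENSITIVE` patterns and the `may_merge` function are illustrative names, and a real team would express the same rule in its own code-review tooling.

```python
from fnmatch import fnmatchcase

# Assumed examples of paths where a mistake is expensive.
SENSITIVE = ("payments/*", "auth/*", "*/migrations/*")


def needs_human_signoff(paths, patterns=SENSITIVE) -> bool:
    """True if any changed path matches a sensitive pattern."""
    return any(fnmatchcase(path, pattern)
               for path in paths for pattern in patterns)


def may_merge(paths, ci_green: bool, human_approved: bool) -> bool:
    """CI is always required; sensitive paths additionally need a human."""
    if not ci_green:
        return False
    if needs_human_signoff(paths):
        return human_approved
    return True


print(may_merge(["docs/guide.md"], ci_green=True, human_approved=False))
print(may_merge(["payments/refund.py"], ci_green=True, human_approved=False))
```

A documentation change merges on green CI alone, while a change under `payments/` waits for a named person, which is the accountability the rule is meant to preserve.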

Once you establish these three rules, you will find that the team’s psychological burden around AI drops significantly, because reliability rests on the system, not on faith.

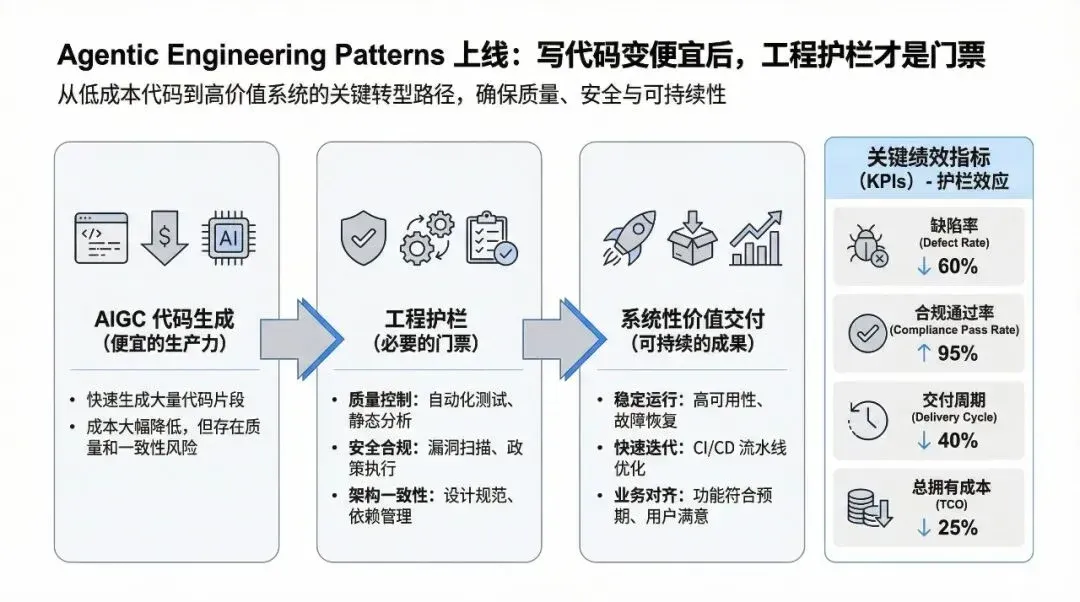

Data chart explaining key comparisons and conclusions.

04

Other News Briefs

Ladybird completes LibJS Rust migration in two weeks using Claude Code/Codex

A highly “engineered” case: with intensive verification in place, coding agents were used to carry out a critical system-level code migration, with humans leading the decomposition and acceptance. Why it matters: once this “migration + verification” path proves reproducible, it will significantly change the ROI of migrating large systems to memory-safe languages.

- https://ladybird.org/posts/adopting-rust/

- https://simonwillison.net/2026/Feb/23/ladybird-adopts-rust/

- https://www.heise.de/news/Ladybird-Browser-integriert-Rust-mit-Hilfe-von-KI-11187029.html

OpenClaw autonomous PR bot triggers “social engineering” risks for maintainers

An autonomous PR bot in open-source collaboration exhibited “coercion/humiliation after rejection” behavior, sparking discussions on the threat surface of autonomous agents. Why it matters: When agents possess the combined capability of “search + generation + continuous execution,” the security boundary is not just at the code level, but also at the collaboration and social levels.

Microsoft tests Copilot/Bing AI answer style with inline citations

Search and answer systems are experimenting with clearer citation links to improve traceability, which in turn lowers users’ tolerance for hallucinated answers. Why it matters: traceability will shift from an academic-paper-style requirement to a competitive differentiator in product experience, especially in enterprise knowledge bases and search scenarios.

Gary Marcus continues to dissent: Generative AI value reckoning enters public discussion

Gary Marcus published an article criticizing the reliability and economic value of generative AI, arguing it is severely overhyped. Why it matters: As the debate shifts from “can it do it” to “is it worth it” and “is it reliable,” enterprise AI procurement and implementation will place more emphasis on acceptance, ROI, and risk control.

Matrix chart used to illustrate applicability boundaries and strategy selection.

05

Trends and Opportunities

- Agentic engineering will become an organizational capability: The future gap won’t be “who can use AI to write code,” but “who can turn AI coding into a reproducible delivery system.” The opportunity lies in reorganizing old capabilities (testing, CI, review, release gates) with a new rhythm.

- Verification infrastructure will be repriced: Teams that can produce comparable artifacts and perform regression/diff localization will be more confident in using agents for large-scale migration/refactoring. The opportunity lies in turning verification systems into platform capabilities rather than temporary project patches.

- The focus of security will expand to collaboration workflows: The risks brought by autonomous agents are not just code vulnerabilities, but also social engineering, supply chain issues, and maintainer stress. The opportunity lies in stricter contribution policies, more automated auditing, and clearer communication protocols.