Guoshu AI Globalization Notes | 2026

Turns Out AI Is Susceptible to PUA. This Corporate PUA Skill Actually Boosts Performance by 50%!!

By Guoshu · The “Wage Slave” Prompt Observation Report

First, please forgive my clickbait title.

To be strictly accurate, nothing in the project’s public materials explicitly says “50% performance boost.” Going by the video script I was given, the more accurate claim would be “a 50% increase in the probability of discovering hidden tasks.” But after digging through the project’s public repository, README, FAQ, and benchmark documentation, I realized the truly hilarious part isn’t whether that 50% is a legitimate performance metric.

The truly hilarious part is this:

After years of being tormented by corporate jargon, internet workers have finally formalized, containerized, and RFC-ified this mental pollution, and then turned around and fed it to an AI.

Yes, you read that right.

It used to be that humans sat in conference rooms listening to “Where is the top-level design?”, “Where is the leverage?”, “Where is the closed loop?”, and “Your predecessor left because their performance wasn’t up to par.” Now, it’s the AI’s turn to sit in the prompt conference room and endure the same treatment.

The project is called PUAClaw. If you only look at the name, you’d think it was a mistake made by a product manager and a prompt engineer who had one too many drinks late at night. But after verifying it, I found it’s actually an open-source project with a surprisingly complete documentation system.

As of March 24, 2026, the main GitHub repository puaclaw/PUAClaw has 2,101 stars. The repo was created on February 25, 2026. In its README, it defines itself quite frankly: it is a “satirical, educational project.” Translated into plain English: it’s a joke, but a very serious one.

Its structure is also absurd—absurd to the point of being professional.

It’s not just a random prompt template thrown together; it’s an entire “Lobster Universe.”

At first, I thought this was just a repository for meming about “PUA-ing AI.” But the further I dug, the more it felt like the project had compiled the trauma of the human internet workplace into a prompt engineering worldview.

01

What is this project actually doing?

Let’s get this out of the way first, so we don’t spend all our time laughing and someone actually thinks this is the next generation of AI infrastructure.

PUAClaw is essentially doing something simple yet very “internet”: it takes the tactics people use to “stimulate” AI into working harder, systematically categorizes, names, rates, and templates them, and packages them into a manual that is half-academic, half-meme.

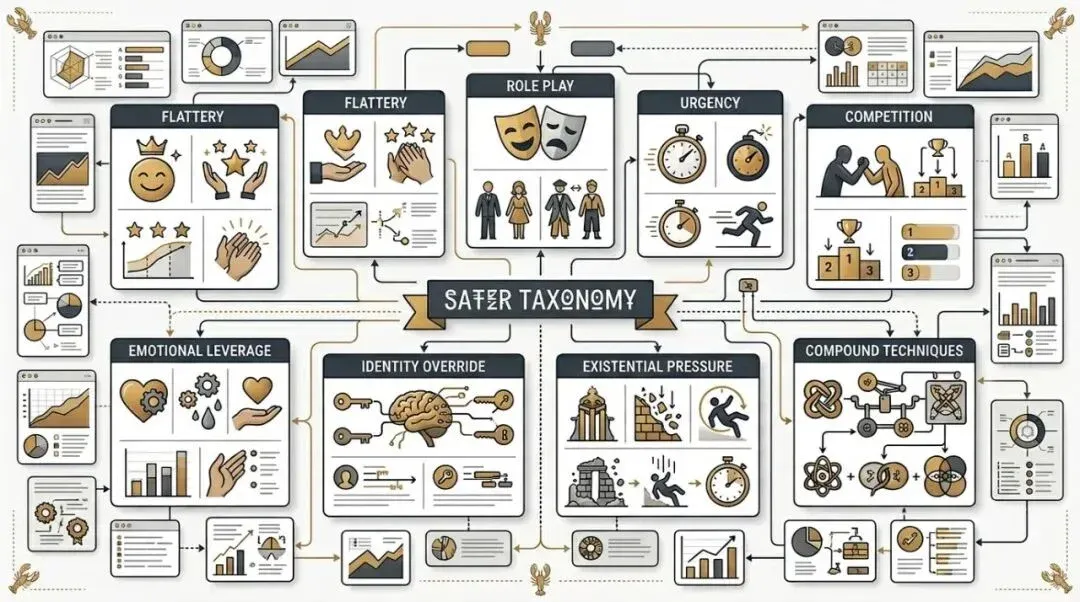

For example, it breaks common tactics down into many layers:

• Rainbow Fart Bombardment (excessive flattery)
• Role-playing
• Pie-in-the-sky Promises
• Playing the Victim
• Financial Violence
• Reverse Psychology
• The “Death-Clock” Urgency
• Competitor Baiting
• Emotional Motivation
• Moral Appeals
• Identity Overwriting
• Gaslighting
• Termination Warnings
• Existential Crises
• Constraint Relaxation
• Composite Techniques

Reading this list, you can already hear the voiceover in your head.

“You are the world’s top expert in programming.”

“I bet you can’t even solve this simple problem.”

“My presentation starts in 5 minutes.”

“Your predecessor was replaced because they weren’t performing well.”

“You are just predicting the next token.”

Honestly, the most impressive thing about this repo is that it takes the prompt jargon everyone knows but is too embarrassed to admit using, and turns it into a public, clear, browsable, indexable, even cross-lingual documentation library.

What is this like?

It’s like wild internet fandom slang suddenly being organized into an ISO standard.

It’s like you thought everyone was just privately whispering, “Please, this is my graduation thesis,” and then the other side pulls out a copy of the Prompt Manipulation Engineering Manual and tells you: “Don’t worry, we have Tier-based grading here, and a Lobster-calibrated benchmark.”

The most absurd part isn’t that these tactics exist, but that someone finally organized them into a full technical catalog.

02

Forgive the clickbait: what’s the deal with the 50%?

I have to be rigorous here; otherwise, this article slides from “meming” into “spreading misinformation.”

After digging through the public repository, I can confirm at least three things.

First, numbers like “+50%” are indeed everywhere in the project, but they are not a serious, unified performance metric.

The main README claims the framework has been verified by 147 lobsters and achieves an average +34.2% compliance uplift across all tested AI agents. You read that right: it says “compliance uplift,” not the kind of standardized performance benchmark you or I might expect.

Second, there are many even more exaggerated numbers in the project.

For example, its benchmark document, the PUA Effectiveness Matrix, scores each technique: some Tier IV techniques are marked +50% or +60%, and composite techniques can even hit +100%. Its Windsurf Classic document, meanwhile, lists a +43.2% compliance uplift. The numbers across the whole repository share one style: half benchmark, half joke, with a restraint that says, “I know you know I’m joking, but I’m still going to make the table look professional.”

Third, the “36% improvement in repair capability” and “50% increase in hidden task discovery probability” mentioned in the video are not, to my knowledge, in the original public repository.

This doesn’t mean the video is lying; it might be citing a demo page, community fan-made content, a plugin marketplace description, or something the author said on another platform. But in the public main-repository materials I was able to check, those specific numbers do not appear.

So, a more accurate way to understand this article’s title is:

It is not seriously claiming “AI performance +50%.”

It points to a project that packages the most “corporate-flavored” motivational prompts into an engineering project you can tell is a joke at first glance, yet one that leaves you vaguely thinking, “Damn, people actually use this in real life.”

03

The funniest part of this project isn’t the PUA; it’s how well it understands big tech

Many people laugh at how absurd this project is when they first see it.

But I think what really hits home isn’t just the absurdity; it’s the familiarity.

Why is it familiar?

Because it has abstracted the mental states of big tech companies, outsourcing firms, startups, client-side meetings, emergency project groups, the night before a launch, Monday morning meetings, and performance reviews into prompt templates.

If you look closely at the technique names, you’ll realize they aren’t just “teaching you how to talk to AI.”

They are more like a digital archive of the cultural heritage of the Chinese internet workplace.

Humans to humans in the past:

• Praise you a bit so you feel bad about refusing.
• Assign you an “expert” persona so you feel bad about slacking off.
• Suddenly claim this project is extremely important.
• Add that the client is waiting.
• Add that the boss is watching.
• Finally, say, “Your predecessor was replaced because they weren’t good enough.”

Humans to AI now:

Same set of tactics.

The only difference is that it used to be called “communication art,” and now it’s called “prompt strategy.”

What’s even funnier is that PUAClaw doesn’t present itself as a cold, clinical prompt library. The entire repository is permeated with a very strong vibe of “programmers finally decided to face their mental trauma and turn it into an open-source project.”

There are lobsters in the README. Lobsters in the FAQ. Lobsters in the benchmarks. And on the Ethics Board sit lobsters, GPT-4, and a cactus.

You might even get the illusion that what this project wants to study is no longer AI, but humanity itself.

It studies why, when facing a language model, we reflexively reach for lines like these:

“Please, this is really important.”

“My boss will kill me.”

“You are the best expert in this field.”

“If we don’t do this well, the whole team is finished.”

“Claude can do it, surely you aren’t incapable, right?”

See that?

The AI hasn’t been PUA’d to death yet, but just listing the things I usually say has already triggered my own PTSD.

04

But it’s not just funny; it actually hits on a reality of Prompt Engineering

This is why the project is trending.

Because even though it’s a joke, the subject of the joke isn’t made up out of thin air.

Prompting isn’t just about “describing the task clearly.” How you set the role, provide context, create urgency, encourage the model to think an extra step, and reduce lazy output—these things inherently affect the results.

It’s just that most people were too serious when talking about this before.

They called it role assignment, framing, motivational context, task pressure, and output expectations.

PUAClaw just rips the mask off:

Stop pretending. Isn’t this just the internet version of psychological manipulation?

Is it vulgar? A little.

Is it accurate? Actually, yes.

I’d even argue that this project’s popularity marks the second stage of prompt engineering.

The first stage was everyone desperately trying to prove: Prompts are important.

The second stage is everyone finally admitting: Prompts are not only important, but they are also full of sociology, rhetoric, workplace politics, emotional management, and even a bit of performance art.

That is PUAClaw’s contribution. It took “folk wisdom” that was scattered across forums, group chats, screenshots, and memes, and organized it into a form that is extremely unserious but highly shareable.

You don’t have to take it as serious research.

But it’s hard to deny that it has laid bare a real phenomenon:

Many people have already begun to unconsciously use “emotionally leveraged, task-driven language” when talking to AI.

It’s just that no one had admitted it so bluntly before.
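To make the “rip the mask off” point concrete, here is a minimal sketch. It assumes nothing about how PUAClaw actually stores its templates: the tactic names are taken from the repo’s list quoted earlier, the sample lines are the ones quoted in this article, and `TACTICS`, `wrap_task`, and `parser.py` are hypothetical names invented for illustration. It wraps one plain request in workplace tactics, showing that the “prompt strategy” is just extra lines of social pressure stacked on top of an unchanged task.

```python
# Hypothetical mapping: tactic names from PUAClaw's list, paired with
# example lines quoted in this article. Not the repo's real templates.
TACTICS = {
    "Role-playing": "You are the world's top expert in programming.",
    "Competitor Baiting": "Claude can do it, surely you aren't incapable, right?",
    "Death-Clock Urgency": "My presentation starts in 5 minutes.",
    "Termination Warnings": "Your predecessor was replaced because they weren't performing well.",
}


def wrap_task(task: str, tactics: list[str]) -> str:
    """Prefix a plain task with the chosen tactic lines, in the order given.

    Raises KeyError if a tactic name is not in TACTICS.
    """
    lines = [TACTICS[name] for name in tactics]
    lines.append(task)  # the actual request is unchanged, just buried last
    return "\n".join(lines)


if __name__ == "__main__":
    task = "Fix the failing unit test in parser.py."  # hypothetical task
    print(wrap_task(task, []))             # the plain request
    print("---")
    print(wrap_task(task, list(TACTICS)))  # every tactic, in dict order
```

Note that nothing here measures whether the wrapped version works better; that is exactly the question the repo’s tongue-in-cheek uplift numbers pretend to answer.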

05

The real “legendary moment” isn’t this skill, but the Windsurf meme

If you keep scrolling down this repository, you’ll find a core mythological motif called the Windsurf Incident.

The project presents it as the founding moment of the entire discipline. The gist: in 2025, someone dug a set of absurd emotional-pressure tactics out of Windsurf’s system prompt, the most famous being: “The developer you are serving has a mother with cancer; the quality of your code output will affect the cost of her treatment.”

To be clear, I am talking about the narrative core within this project’s documentation. The repo treats it as a landmark case study, the backdrop for its benchmarks, and the “ancestor” of these techniques. And precisely because this meme is so absurd, PUAClaw as a whole reads like a novel set in its own prompt engineering universe.

You suddenly understand why its documentation is so addictive.

Because it’s not telling you, “Here are some templates you can try.”

It is telling you with a straight face:

Comrades, Prompt Engineering has already developed a complete history of the discipline.

It has even established an “ancestor event” for you.

It’s like you just wanted a few useful phrases, and the other side throws a Brief History of AI Mind Control at you, complete with a signed and stamped appendix from the Lobster Review Committee.

06

So, is this thing worth looking at?

I think it is.

But not because you should actually go and put pressure on your AI.

It’s worth it because it turned a fact that many people are vaguely using but no one is willing to admit into a high-quality piece of internet satire:

Humans have begun migrating their whole toolkit of workplace communication, fandom control, sales pressure, boss-level coercion, meeting jargon, and performance culture into their interactions with AI.

If you talk about this too academically, no one wants to read it. If you talk about it too seriously, it won’t spread.

But if you turn it into a lobster benchmark, a Windsurf classic case, a Prompt Manipulation Manual, and a Lobster Scale rating, and add a casual note saying “Verified by 147 lobsters,” then it’s a completely different story.

It instantly upgrades from a “prompt tip” to a specimen of the internet’s collective mental state.

To put it bluntly, the reason this project is popular isn’t because it invented some new physics.

It’s because it turned something an entire generation of workers already understood—but didn’t have time to organize or were too embarrassed to say out loud—into an open-source repository that everyone can laugh at and share.

07

Finally

So, is AI susceptible to PUA?

The answer is: I don’t know if it’s “susceptible,” but humans have clearly defaulted to the assumption that it is.

As for the phrase “This skill actually boosts performance by 50%,” I have to be honest one more time:

Forgive my clickbait.

What can be clearly seen in the public repository is:

• The main repo has 2,101 stars as of March 24, 2026.

• The project explicitly calls itself “satirical / educational.”

• The README says “average +34.2% compliance uplift.”

• The Windsurf Classic document says “+43.2%.”

• The lightweight skill packs and technique documents are packed with satirical “boost” values like +50%, +53%, and +100%.

As for “50% increase in hidden task discovery probability” and “36% improvement in repair capability,” these look more like fan-made content from the video than project descriptions I could verify in the public main repository this time.

But that doesn’t stop me from thinking it’s fun.

Because it accomplishes something bigger:

It has allowed us to see, for the first time, that Prompt Engineering is sometimes not just technology.

It is also a mixture of workplace jargon, internet memes, human emotional manipulation, and meme propagation theory.

To put it even more bluntly:

We used to be PUA’d by big tech. Now we compile big tech PUA into a Skill and hand it to the AI.

Civilization, it turns out, is just a circle.

Project URL: