Digital Strategy Review | 2026

AI Is Starting to “See” Your Website: Why SEO Needs an AI Readability Checklist | Uncle Guo’s SEO Daily

By Uncle Guo · 8 Min Read

Preface

In the past, we assumed there were only two types of “readers” for our websites: humans and search engines.

From now on, you need to add a third reader to your mental model: AI bots. They crawl your site, compress your content into a summary, and directly shape the user’s first impression of your brand, often before the user even clicks through to your site.

In other words, your website must not only be “indexable” but also “correctly understandable by AI.” Once this becomes the standard, the priorities of technical SEO will be reordered: rendering methods, structured data, and content extractability will become the new traffic bottlenecks.

01

Today’s Top Headlines

Search Engine Journal published a (sponsored) article titled “What AI Sees When It Visits Your Website (And How To Fix It),” which thoroughly explains a shift currently underway: AI platforms don’t just provide you with a link; they “explain who you are” and then present that explanation to the user.

The article offers a practical, three-step approach:

- 01 Manually query the AI: Ask ChatGPT, Gemini, or Perplexity the questions your users would ask. See whether your brand appears, where it appears, whether the tone is accurate, and whose information is being cited.

- 02 Conduct competitive benchmarking: Ask the AI for recommendations that compare you with your competitors. Observe which brands are “consistently mentioned” and which pages or third-party sources the AI cites.

- 03 Verify at the technical layer: Check server logs to see whether AI crawlers are actually visiting. Distinguish between training, indexing, and retrieval bots. Check which pages they visit and which core pages are being ignored.
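Step 03 can start with a small log script. The sketch below assumes an access log in the Combined Log Format (commonly used by nginx and Apache) and uses an illustrative, hypothetical mapping of user-agent tokens to bot purpose. The tokens are names vendors have published, but these lists change, so check each vendor’s crawler documentation before relying on them. User-agent strings can also be spoofed, so treat the counts as a first pass rather than verified traffic.

```python
import re
from collections import Counter, defaultdict
from typing import Optional

# Hypothetical, illustrative mapping of user-agent substrings to bot purpose.
# Verify every token against each vendor's current crawler documentation.
BOT_CATEGORIES = {
    "GPTBot": "training",
    "ClaudeBot": "training",
    "CCBot": "training",
    "OAI-SearchBot": "indexing",
    "Claude-SearchBot": "indexing",
    "PerplexityBot": "indexing",
    "ChatGPT-User": "retrieval",
    "Claude-User": "retrieval",
    "Perplexity-User": "retrieval",
}

# Combined Log Format: ip ident user [time] "METHOD path PROTO" status size "referer" "ua"
LOG_RE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "\S+ (?P<path>\S+)[^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def classify(user_agent: str) -> Optional[str]:
    """Return the bot category for a user agent, or None if it is not a listed AI bot."""
    ua = user_agent.lower()
    for token, category in BOT_CATEGORIES.items():
        if token.lower() in ua:
            return category
    return None


def summarize(lines):
    """Count AI-bot hits per bot category and path from access-log lines."""
    hits = defaultdict(Counter)
    for line in lines:
        match = LOG_RE.match(line)
        if not match:
            continue  # skip malformed or non-combined-format lines
        category = classify(match.group("ua"))
        if category:
            hits[category][match.group("path")] += 1
    return hits


def ignored_pages(hits, core_pages):
    """Return core pages (exact path match) that no listed AI bot requested."""
    seen = set()
    for counter in hits.values():
        seen.update(counter)
    return [page for page in core_pages if page not in seen]
```

For example, pass an open log file to `summarize` and your list of core URL paths to `ignored_pages` to see which key pages no AI bot has fetched.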

The article also highlights an often-overlooked reality: many modern websites are packed with JavaScript, lazy-loading, and carousels for the sake of user experience. While this may benefit humans, it is not necessarily friendly to machine visitors. Content that AI cannot see effectively does not exist in the AI world.

If you think of this as a gateway to “AI visibility,” a hidden risk emerges: your site may be “rejecting” AI visitors without you even realizing it. The article notes that Cloudflare began blocking AI crawlers by default in 2025; default policies like this can quietly turn many sites into “blind spots” in the AI landscape. Supplementary source: https://blog.cloudflare.com/cloudflare-will-block-ai-crawlers-by-default/

02

Headline Analysis: Why This Matters More

I prefer to interpret this news as the “visibility chain” of SEO being split into two segments.

The old chain was: Crawling -> Indexing -> Ranking -> Click -> Conversion. The new chain is: Crawling -> Understanding -> Summarizing -> (User forms an impression within the AI) -> Click/No Click -> Conversion.

This means you are no longer optimizing only for ranking systems, but also for the “quality of content extraction and retelling.” That shift directly affects three things:

1) Site architecture is moving from “human-only” to “human-machine dual-stack”

Google Search Central’s JS SEO documentation has consistently stated one thing: Google handles JavaScript, but it’s not a free lunch. It relies on a full process of rendering, resource loading, and queue scheduling. Developers must build according to best practices.

Source (Google Search Central): https://developers.google.com/search/docs/crawling-indexing/javascript/javascript-seo-basics

In practice, the “machine visitors” you face are not just Googlebot. Retrieval-based user agents on AI platforms often fetch pages in real time; they may not perform full rendering or long-term indexing the way Google does, and they are unlikely to wait patiently for your frontend framework to finish hydrating.

Therefore, “being visible to a browser” does not equal “being reliably extractable by a bot.” You need a more reliable way to deliver content to machines: more direct HTML output, clearer structure, and key text that depends less on interaction.

2) Dynamic rendering as a “patch” is increasingly unsustainable

Google explicitly describes dynamic rendering as a workaround rather than a long-term solution, and recommends server-side rendering, static rendering, or hydration instead. For brand sites, the more stable direction remains SSR (Server-Side Rendering) or pre-rendering, which ensures core content is delivered in the initial HTML.

Source (Google Search Central): https://developers.google.com/search/docs/crawling-indexing/javascript/dynamic-rendering

Think of it this way: to prevent AI from misinterpreting you, ensure the machine receives the “definitive original text” first.
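A minimal sketch of build-time pre-rendering, assuming your core content can be read from a data source when the site is built. `PAGES`, `prerender`, `build`, and the Acme copy are hypothetical placeholders; real frameworks ship their own SSR or static-generation features. The point it illustrates is narrow: the definitive text is already in the initial HTML before any script runs, and JavaScript can still enhance the page afterwards.

```python
from html import escape
from pathlib import Path

# Hypothetical page content; in practice this would come from a CMS or data layer.
PAGES = {
    "pricing": {
        "title": "Pricing",
        "definition": "Acme Widget is a usage-based analytics tool for small teams.",
        "points": ["Free tier up to 10k events", "Billing per active seat"],
    },
}

TEMPLATE = """<!doctype html>
<html lang="en">
<head><meta charset="utf-8"><title>{title}</title></head>
<body>
<main>
<h1>{title}</h1>
<p>{definition}</p>
<ul>{items}</ul>
</main>
<script src="/app.js" defer></script>
</body>
</html>"""


def prerender(page: dict) -> str:
    """Render a page's core content into static HTML, escaping all text."""
    items = "".join(f"<li>{escape(point)}</li>" for point in page["points"])
    return TEMPLATE.format(
        title=escape(page["title"]),
        definition=escape(page["definition"]),
        items=items,
    )


def build(out_dir: Path) -> list[Path]:
    """Write one HTML file per page, e.g. as a build step before deploy."""
    out_dir.mkdir(parents=True, exist_ok=True)
    written = []
    for slug, page in PAGES.items():
        path = out_dir / f"{slug}.html"
        path.write_text(prerender(page), encoding="utf-8")
        written.append(path)
    return written
```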

3) If AEO/GEO focuses only on content, it will fail at accessibility

Many teams are starting to research AEO (Answer Engine Optimization), GEO (Generative Engine Optimization), and AIO (AI Optimization). These are fine, but it’s easy to fall into a trap: believing that as long as the content structure is correct, AI will cite you.

The reality is a more fundamental barrier: no matter how well the content is written, if the bot cannot enter, crawl, or parse it, the result is still zero.

Therefore, the value of today’s news is that it elevates “AI accessibility” above content strategy: first ensure AI can read it, then discuss whether AI will cite it.

Flowchart used to explain the methodological execution path.

03

Uncle Guo’s Perspective

I suggest treating “AI Readability” as a new site audit item, turning it into a repeatable, quantifiable checklist. Below is what I consider the version with the lowest cost and highest return.

1) Rendering Layer: “Rescue” key content from JS

Prioritize checking these three things:

- View Source for core text: If the raw source contains only an app shell and the body text depends entirely on JS, machine visitors that do not execute JavaScript see little or nothing, and extraction failures rise sharply.

- Extractability of the first screen: Key definitions, product selling points, and core steps should appear on the first screen and should not depend on scrolling, clicking, or lazy loading to be revealed.

- Consistency across states: If the same URL returns vastly different content to different user agents, regions, or login states, AI is more likely to “misinterpret” you.
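The checks above can be partly scripted. This sketch uses only the Python standard library: it reads the initial HTML without executing JavaScript, extracts the visible text, reports which key phrases are missing, and compares two responses for the same URL. The helper names and the word-level `difflib` similarity are illustrative assumptions, not a standard metric, and the script cannot tell whether text sits on the first screen; that still needs a visual check.

```python
import difflib
from html.parser import HTMLParser
from urllib.request import Request, urlopen

SKIP_TAGS = {"script", "style", "template"}


class TextExtractor(HTMLParser):
    """Collect text from raw HTML, ignoring script, style, and template contents."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.parts.append(data.strip())


def visible_text(raw_html: str) -> str:
    parser = TextExtractor()
    parser.feed(raw_html)
    parser.close()
    return " ".join(parser.parts)


def _norm(text: str) -> str:
    return " ".join(text.lower().split())


def missing_phrases(raw_html: str, key_phrases: list[str]) -> list[str]:
    """Key phrases that do not appear in the initial HTML's visible text."""
    text = _norm(visible_text(raw_html))
    return [p for p in key_phrases if _norm(p) not in text]


def similarity(html_a: str, html_b: str) -> float:
    """Word-level similarity (0 to 1) between two responses for the same URL."""
    a = visible_text(html_a).split()
    b = visible_text(html_b).split()
    return difflib.SequenceMatcher(None, a, b).ratio()


def fetch_raw(url: str, user_agent: str = "readability-audit/0.1") -> str:
    """Fetch the initial HTML with a chosen user agent; no JavaScript runs."""
    req = Request(url, headers={"User-Agent": user_agent})
    with urlopen(req, timeout=10) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")
```

For the consistency check, you might compare `fetch_raw(url)` with `fetch_raw(url, user_agent=...)` using a bot-like string; a low `similarity` score suggests the two audiences receive different content. Some servers reject unfamiliar user agents outright, which is itself worth knowing.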

2) Structural Layer: Let AI “guess less”

Think of AI summarization as a game of “telephone”: the more it has to guess, the more distorted the output becomes. The goal of the structural layer is to reduce guessing:

- Use clear H2/H3 hierarchies to organize arguments.

- Write FAQs directly using list or Q&A structures.

- Use tables for product parameters, use cases, and constraints.

- Add a “definition sentence” for key concepts to make extraction more reliable.
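For the FAQ item, one common complement to visible Q&A markup is schema.org `FAQPage` structured data. The sketch below generates it as JSON-LD; the function names are hypothetical, and the source does not establish how much weight any particular AI platform gives to JSON-LD, so the visible Q&A text on the page should carry the same content.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage data from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def script_tag(data) -> str:
    """Serialize to a JSON-LD script tag; escape "<" so text cannot close the tag early."""
    payload = json.dumps(data, ensure_ascii=False).replace("<", "\\u003c")
    return f'<script type="application/ld+json">{payload}</script>'
```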

3) Governance Layer: Manage bots like real visitors

At a minimum, you should:

- Identify mainstream AI crawlers (training, retrieval, indexing) in your logs.

- Have clear policies for robots.txt, WAF, and rate limits, rather than “letting them pass by chance.”

- Ensure default policies are explainable: who was blocked today, why, and what is the potential loss?

Especially for sites using infrastructure like Cloudflare, treat “default blocking” as a change risk. Don’t wait until traffic drops to react.
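One way to keep robots.txt policy explainable is to store the agreed decisions as a test and run it on every change. The sketch below uses Python’s standard `urllib.robotparser`; the policy, the example.com URLs, and the bot names in `EXPECTED` are illustrative assumptions to check against each vendor’s documentation. Two caveats: robots.txt is advisory and only binds crawlers that honor it, and blocks applied at the CDN or WAF layer (such as a default AI-crawler block) will not show up here, so pair this with the log checks. Python’s parser also does not implement every detail of RFC 9309 (path wildcards, for example), so real crawlers may read edge cases differently.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: opt out of training crawlers, keep search/retrieval open.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

# Decisions the team has agreed on: (user agent, URL) -> should it be allowed?
EXPECTED = {
    ("GPTBot", "https://example.com/pricing"): False,
    ("ClaudeBot", "https://example.com/pricing"): False,
    ("OAI-SearchBot", "https://example.com/pricing"): True,
    ("Claude-User", "https://example.com/docs"): True,
    ("Claude-User", "https://example.com/admin/"): False,
}


def policy_drift(robots_txt: str, expected: dict) -> list[tuple]:
    """Return (user agent, URL, expected) for every decision the file no longer matches."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [
        (ua, url, want)
        for (ua, url), want in expected.items()
        if parser.can_fetch(ua, url) != want
    ]
```

An empty result means the file still matches the agreed policy; anything else is a change to review before it ships.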

4) Monitoring Layer: Use “Prompt Monitoring” instead of “Ranking Only”

In the SEO era, we watched rankings. In the AI era, you must add a dimension: watch what AI says about you.

The simplest method is to run a fixed set of questions every week (category keywords, comparison keywords, decision keywords, after-sales keywords) and record:

- Whether you are mentioned.

- Who the cited source is.

- Whether the facts are accurate.

- Whether the tone is negative.

Treat this as “brand sentiment monitoring within AI,” and you will discover problems much earlier.
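The weekly checklist can be kept as structured records instead of screenshots. This sketch assumes answers are collected by hand or with whatever tooling you already use, and covers only storage and a weekly roll-up; `PromptCheck` and `weekly_summary` are hypothetical names whose fields mirror the four items above.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class PromptCheck:
    """One check of one question on one AI platform (hypothetical schema)."""
    week: str          # e.g. "2026-W10"
    platform: str      # e.g. "ChatGPT", "Gemini", "Perplexity"
    query_type: str    # category / comparison / decision / after-sales
    question: str
    mentioned: bool
    cited_sources: list[str] = field(default_factory=list)
    facts_accurate: bool = True
    tone: str = "neutral"  # "positive", "neutral", or "negative"


def weekly_summary(checks: list[PromptCheck], week: str) -> dict:
    """Roll up one week's checks: mention rate, problems, and most-cited sources."""
    rows = [c for c in checks if c.week == week]
    if not rows:
        return {}
    return {
        "checks": len(rows),
        "mention_rate": sum(c.mentioned for c in rows) / len(rows),
        # Only mentions can be factually wrong about you.
        "inaccurate": [c.question for c in rows if c.mentioned and not c.facts_accurate],
        "negative": [c.question for c in rows if c.tone == "negative"],
        "top_sources": Counter(s for c in rows for s in c.cited_sources).most_common(3),
    }
```

Comparing `mention_rate` and `top_sources` week over week is what turns individual answers into a trend you can act on.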

Data chart used to explain key comparisons and conclusions.

04

Other Key News Briefs

1) Anthropic’s Claude crawler lineup is becoming clearer; webmasters need more precise robots.txt strategies

Discussions around the crawling strategies of the Claude bot series (training/retrieval, etc.) are heating up. Site operations are moving from “Should I let AI in?” to “Which AI should I let in, and for what purpose?” Source: https://www.searchenginejournal.com/anthropic-claude-bots-robots-txt-ai-decisions/568253/

2) Google Discover core update complete; content distribution enters a new recalculation period

Fluctuations in Discover have a massive impact on content sites. The completion of an update often means it’s time to re-evaluate topic structures, headline strategies, and visual asset quality. Source: https://www.searchenginejournal.com/googles-discover-core-update-finishes-rolling-out/568413/

3) Microsoft Advertising launches self-service negative keyword lists

For ad teams, this level of control directly affects account structure and the cost of managing accounts at scale. Source: https://www.seroundtable.com/microsoft-advertising-negative-keyword-lists-41005.html

4) Google Ads pushes text guidelines in AI Max to more advertisers

Platform “unified copy standards” often mean that creative review and asset production processes must be adjusted accordingly. Source: https://www.seroundtable.com/google-ads-text-guidelines-global-40997.html

5) Google tests adding “Web mentions” to retailer store pages

Surfacing off-site mentions (such as Reddit discussions) on merchant pages as visible modules will change the trust structure of local and e-commerce SERPs. Source: https://www.seroundtable.com/google-web-mentions-in-retailer-store-pages-40994.html

6) Bing tests 2x2 video grid layout; video SERP evolution continues

The evolution of SERP formats is essentially a “redistribution of attention.” Content teams must synchronize their video asset title and thumbnail strategies. Source: https://www.seroundtable.com/bing-video-grid-layout-40945.html

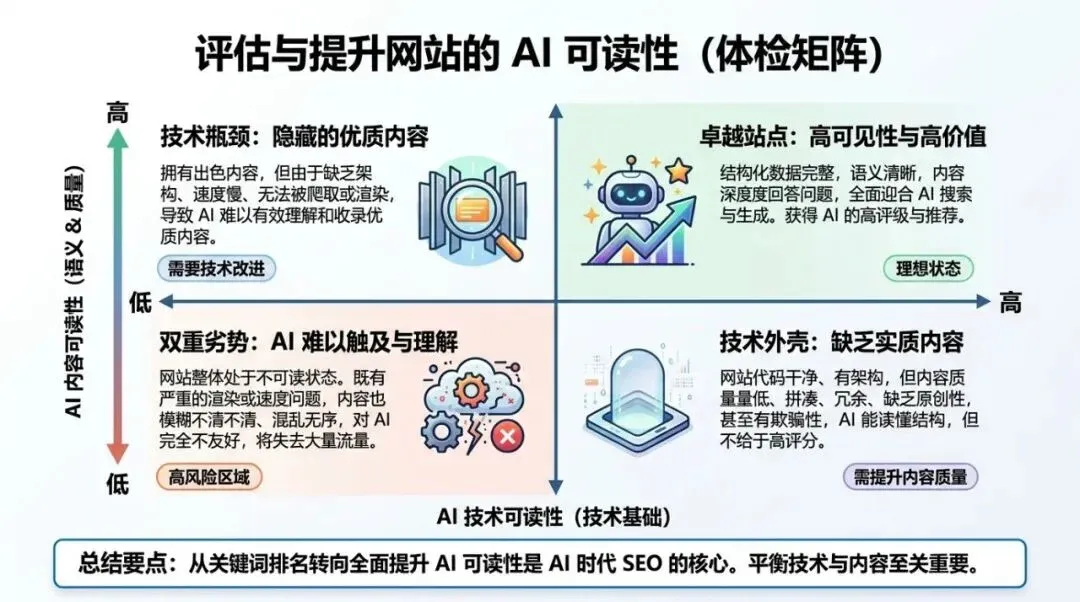

Matrix chart used to illustrate applicability boundaries and strategy selection.

05

Trends and Opportunities

1) AI readability will become a new category of “Technical Debt”

Previously, technical debt referred mostly to performance and maintainability. Now there will be a new category: machine-readability technical debt. It’s not about pleasing search engines, but about ensuring AI visitors can reliably access the original text.

2) The opportunity for AEO/GEO lies in “structured delivery,” not just “writing more”

If your content can be reliably crawled, is clearly structured, and contains complete information, the probability of AI citing you is naturally higher. Conversely, the more fragmented, interaction-dependent, and “visual-heavy” your content is, the more likely it is to be distorted in the AI world.

3) New roles will emerge: From SEO to “AI Visibility Operations”

When the monitoring dimension expands from “rankings” to “what AI says about you,” operational actions will also expand: log analysis, bot governance, content structuring, and brand information consistency will be integrated into a more product-oriented workflow.