Digital Strategy Review | 2026

Claude Code Top 10 Highlights 03 | Fork Subagent and Prompt Cache: A Rare “Cost-Level Innovation”

By Uncle Guo · Reading Time / 8 Min

Preface

Many multi-agent designs focus solely on whether the functionality works, rarely stopping to ask: “If we fork frequently, will the costs collapse the system?” In this article, I want to clarify how Claude Code has turned delegation into an engineering path that is sustainable for the long term.

01

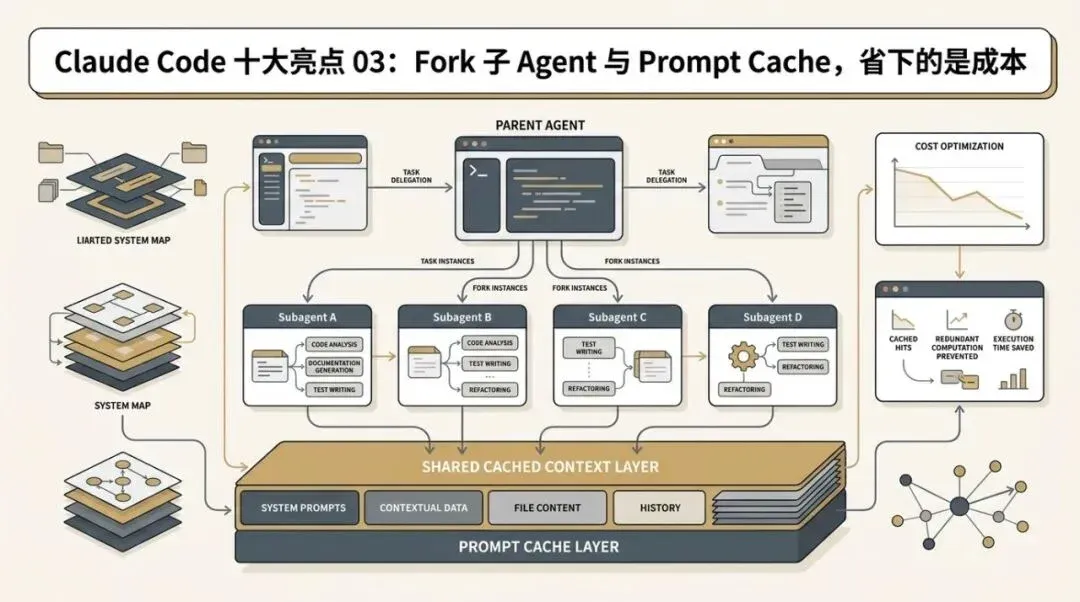

Fork Subagent and Prompt Cache: A Rare “Cost-Level Innovation”

When many teams build multi-agent systems, their focus often stops at:

• Can it fork?

• Can it inherit context?

• Can it run in the background?

Claude Code goes much further. It doesn’t just consider whether a forked sub-agent can work; it seriously considers whether the token costs after the fork will explode.

This is exactly what makes src/tools/AgentTool/forkSubagent.ts so brilliant.

02

I. Why Ordinary Forking Approaches Fall Short

The most naive implementation of a fork usually looks like this:

1. Copy the parent context.

2. Append a task description for the sub-agent.

3. Send a new model request.

While this works functionally, it faces a very practical problem:

Every forked child carries a massive, nearly identical prompt prefix, burning tokens repeatedly.

If the system forks frequently, costs will skyrocket when multiple sub-agents run concurrently.

This issue isn’t obvious during the demo phase, but once it enters high-frequency, real-world usage, it quickly becomes a platform-level burden.
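To make the scale of the problem concrete, here is a minimal sketch of the naive cost. The interface, the function name, and all token counts are illustrative assumptions, not figures from Claude Code.

```typescript
// Illustrative cost model; every name and number here is an assumption.
interface NaiveForkInput {
  prefixTokens: number;    // tokens in the copied parent context
  directiveTokens: number; // tokens in one child's task description
  children: number;        // number of forked sub-agents
}

// With no cache reuse, each child is billed for the full prefix again.
function naiveInputTokens(input: NaiveForkInput): number {
  return input.children * (input.prefixTokens + input.directiveTokens);
}

// Same 50,000-token parent context, forked 1, 4, and 16 times.
const naiveTotals = [1, 4, 16].map((children) =>
  naiveInputTokens({ prefixTokens: 50000, directiveTokens: 200, children })
);
// naiveTotals is [50200, 200800, 803200]
```

Almost all of that total is the repeated prefix; the per-child directive barely registers.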

Claude Code clearly noticed this.

What makes Claude Code truly impressive is that it treats delegation as a problem of cache-friendly input construction.

03

II. Claude Code’s Goal: Not Just “Can Fork,” but “Fork with High Reuse”

The key design goal in forkSubagent.ts can be summarized in one sentence:

Make the request prefixes of forked children byte-for-byte identical to maximize prompt cache hits.

This is a highly advanced goal. It shows that the author isn’t just looking at forking from a product feature perspective, but from the perspective of inference infrastructure costs.

04

III. How It Actually Works

1. Don’t rebuild the parent context; reuse the original assistant message

The forked child retains the complete content of the parent’s assistant message, including:

• thinking

• text

• tool_use blocks

This means the system avoids “reorganizing” existing context, opting instead to reuse the existing structure.

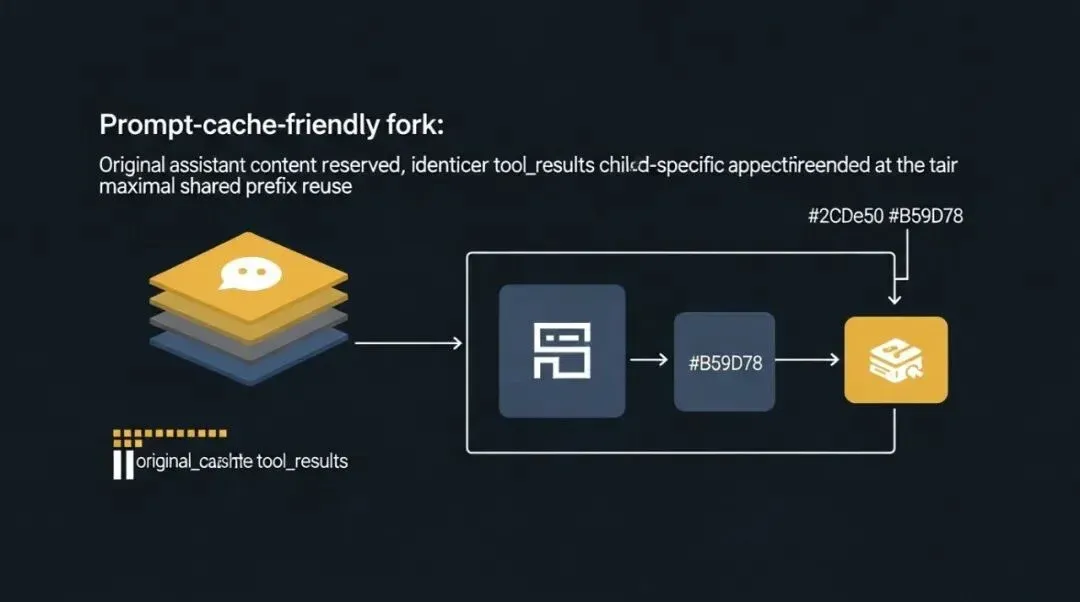

2. Generate a unified placeholder tool_result for all tool_use

This is the most critical stroke in the entire design.

Instead of generating different tool_results for each forked child, it uses a unified placeholder, such as “Fork started — processing in background.”

The effect of this is:

• All sub-agents remain consistent across the large prefix.

• Truly variable content is pushed to the very end.
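The placeholder step can be sketched as follows. The block shapes are simplified from the Anthropic Messages API content blocks, and `placeholderResults` and `FORK_PLACEHOLDER` are hypothetical names for illustration; the actual code in forkSubagent.ts may look quite different.

```typescript
// Simplified content-block shapes; assumptions modeled on the Messages API.
type AssistantBlock =
  | { type: "thinking"; thinking: string }
  | { type: "text"; text: string }
  | { type: "tool_use"; id: string; name: string; input: unknown };

interface ToolResultBlock {
  type: "tool_result";
  tool_use_id: string;
  content: string;
}

// Placeholder wording taken from the article's example.
const FORK_PLACEHOLDER = "Fork started — processing in background.";

// Emit one identical placeholder result for every tool_use block,
// so the results do not vary from child to child.
function placeholderResults(
  blocks: AssistantBlock[],
  placeholder: string
): ToolResultBlock[] {
  const results: ToolResultBlock[] = [];
  for (const block of blocks) {
    if (block.type === "tool_use") {
      results.push({
        type: "tool_result",
        tool_use_id: block.id,
        content: placeholder,
      });
    }
  }
  return results;
}

// Hypothetical parent turn that delegates two tasks.
const parentAssistantBlocks: AssistantBlock[] = [
  { type: "thinking", thinking: "Two independent areas to check." },
  { type: "text", text: "I'll delegate both checks." },
  { type: "tool_use", id: "toolu_01", name: "Agent", input: { task: "Audit the auth module" } },
  { type: "tool_use", id: "toolu_02", name: "Agent", input: { task: "Write parser tests" } },
];

const placeholderBlocks = placeholderResults(parentAssistantBlocks, FORK_PLACEHOLDER);
```

Every `tool_use` still gets a matching `tool_result`, so the tool call relationships stay valid, yet nothing in those results depends on which child is being built.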

3. Keep the actual changing directives at the end

While every forked child has a different task, Claude Code ensures that differences only appear in the final directive text.

This “stable prefix, changing suffix” construction is highly conducive to prompt cache reuse.
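A minimal sketch of the "stable prefix, changing suffix" idea, assuming a simplified message shape. `buildChildMessages`, `sharedPrefixLength`, and the sample messages are hypothetical. The requests are compared as serialized strings, which works as a proxy because identical strings encode to identical UTF-8 bytes.

```typescript
// Simplified shapes; assumptions for illustration only.
interface ContentBlock { type: string; [key: string]: unknown }
interface ChatMessage { role: "user" | "assistant"; content: ContentBlock[] }

// Everything shared goes first; the per-child directive is the last block.
function buildChildMessages(
  sharedMessages: ChatMessage[],
  placeholders: ContentBlock[],
  directive: string
): ChatMessage[] {
  const finalUserMessage: ChatMessage = {
    role: "user",
    content: [...placeholders, { type: "text", text: directive }],
  };
  return [...sharedMessages, finalUserMessage];
}

// Number of leading characters two serialized requests have in common.
function sharedPrefixLength(a: string, b: string): number {
  const limit = Math.min(a.length, b.length);
  let i = 0;
  while (i < limit && a.charCodeAt(i) === b.charCodeAt(i)) i++;
  return i;
}

const sharedMessages: ChatMessage[] = [
  { role: "user", content: [{ type: "text", text: "Review this repository." }] },
  { role: "assistant", content: [{ type: "tool_use", id: "toolu_01", name: "Agent", input: {} }] },
];
const placeholderBlock: ContentBlock = {
  type: "tool_result",
  tool_use_id: "toolu_01",
  content: "Fork started — processing in background.",
};

const childA = JSON.stringify(
  buildChildMessages(sharedMessages, [placeholderBlock], "Audit the auth module")
);
const childB = JSON.stringify(
  buildChildMessages(sharedMessages, [placeholderBlock], "Write tests for the parser")
);

const prefixLen = sharedPrefixLength(childA, childB);
// The two requests only diverge at the first character of the directive.
const divergingTailA = childA.slice(prefixLen);
```

The sketch relies on `JSON.stringify` emitting keys in insertion order. Any real implementation would need equally deterministic serialization, because a single reordered key early in the request ends the shared prefix at that point.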

4. Reuse the system prompt bytes already rendered by the parent session

This point is particularly meticulous.

The system doesn’t simply call getSystemPrompt() again for the sub-agent. A fresh call could be affected by runtime conditions, feature gates, or changes in GrowthBook feature-flag state between calls, and any of these could make the generated bytes differ from the parent’s.

Instead, Claude Code passes the prompt bytes already rendered by the parent session through to the child.
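The difference between re-rendering and threading can be sketched like this. `renderSystemPrompt` is a hypothetical stand-in for getSystemPrompt(), and the flag names, interfaces, and `forkRequest` are all assumptions made for illustration.

```typescript
// Hypothetical runtime flags that influence prompt rendering.
interface RuntimeFlags { [flag: string]: boolean }

// Stand-in renderer: its output depends on whichever flags are on right now.
function renderSystemPrompt(flags: RuntimeFlags): string {
  const enabled = Object.keys(flags).filter((k) => flags[k]).sort();
  return `You are a coding agent.\nEnabled features: ${enabled.join(", ")}`;
}

interface ParentSession {
  renderedSystemPrompt: string; // captured once, when the parent request was built
}

interface ChildRequest {
  system: string;
  directive: string;
}

// Thread the parent's rendered prompt through instead of rendering again.
function forkRequest(session: ParentSession, directive: string): ChildRequest {
  return { system: session.renderedSystemPrompt, directive };
}

const demoFlags: RuntimeFlags = { planMode: true, newToolUi: false };
const parentSession: ParentSession = {
  renderedSystemPrompt: renderSystemPrompt(demoFlags),
};

// A flag flips while the parent session is still running.
demoFlags.newToolUi = true;

const rerenderedPrompt = renderSystemPrompt(demoFlags);
const threadedPrompt = forkRequest(parentSession, "Audit the auth module").system;

// Re-rendering drifts from what the parent sent; threading does not.
const rerenderMatchesParent = rerenderedPrompt === parentSession.renderedSystemPrompt; // false
const threadedMatchesParent = threadedPrompt === parentSession.renderedSystemPrompt;   // true
```

Because the system prompt sits at the very front of the request, even a one-character drift there would invalidate the cached prefix for everything that follows.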

This is a level of rigor rarely seen.

05

IV. Why This Matters So Much

1. It makes multi-agent systems more scalable

Many multi-agent systems are functional but not cost-sustainable. When every sub-agent carries a large, nearly identical context, input costs rise linearly with the number of sub-agents.

Claude Code’s fork design is essentially “batch inference optimization” applied to multi-agent systems.
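A rough sketch of why prefix reuse changes the cost curve. The function names, token counts, and cache multipliers are illustrative assumptions (a cache write priced above a normal input token, a cache read priced well below it); check your provider's current pricing before relying on any of these numbers.

```typescript
// Illustrative pricing, expressed relative to one normal input token.
interface CachePricing {
  writeMultiplier: number; // cost factor for writing the prefix into the cache
  readMultiplier: number;  // cost factor for reading the prefix from the cache
}

// No reuse: every child pays full price for the prefix.
function uncachedCost(prefixTokens: number, directiveTokens: number, children: number): number {
  return children * (prefixTokens + directiveTokens);
}

// Conservative model: the first child writes the prefix, later children read it.
function cachedCost(
  prefixTokens: number,
  directiveTokens: number,
  children: number,
  pricing: CachePricing
): number {
  if (children === 0) return 0;
  const firstChild = prefixTokens * pricing.writeMultiplier + directiveTokens;
  const laterChildren =
    (children - 1) * (prefixTokens * pricing.readMultiplier + directiveTokens);
  return firstChild + laterChildren;
}

const assumedPricing: CachePricing = { writeMultiplier: 1.25, readMultiplier: 0.1 };

// 50,000-token shared prefix, 200-token directive, 8 forked children.
const costWithoutCache = uncachedCost(50000, 200, 8);               // 401600 token-equivalents
const costWithCache = cachedCost(50000, 200, 8, assumedPricing);    // about 99100 token-equivalents
const savingsRatio = costWithoutCache / costWithCache;              // roughly 4x under these assumptions
```

Costs still grow linearly with the number of children, but the slope is set by the cheap cached read rather than by the full prefix price. If the parent's own request has already populated the cache, even the first child's write cost may not apply.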

2. It makes forking a default strategy rather than an expensive privilege

If forking is expensive, the system will tend to minimize delegation. If forking can share a large amount of prompt cache, the runtime is more likely to treat delegation as a standard tool.

3. It reflects the engineering team’s deep understanding of LLM cost structures

This highlight best demonstrates that Claude Code is not just a “product that knows how to write prompts,” but a system that truly understands inference infrastructure.

06

V. How Is This Fundamentally Different from “Shared Context”?

When people hear about this design, their first reaction might be: “Isn’t that just sharing context?”

No, it isn’t.

Ordinary shared context only means that the sub-agent can also see the parent context.

What Claude Code does is design each sub-agent’s input so that, as far as possible, the caching layer sees the same prefix across all children.

The former is functional sharing. The latter is cost engineering.

07

VI. Why This Design Is Difficult to Implement

Because you have to satisfy many conditions simultaneously:

• The sub-agent must still correctly understand the task.

• The parent context must not be corrupted.

• Tool call relationships must be valid.

• Different children must be sufficiently similar.

• Recursive forking must be avoided.

Claude Code even includes specific protections in forkSubagent.ts, such as detecting forked children to prevent further recursive forking.
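One way such a guard could look is sketched below. The article does not say how forkSubagent.ts detects a forked child, so the `isForkedChild` flag, the `AgentContext` shape, and `forkContext` are purely hypothetical.

```typescript
// Hypothetical context shape; the real detection mechanism is not described.
interface AgentContext {
  agentId: string;
  isForkedChild: boolean; // assumed marker set when a context is created by a fork
}

// Refuse to fork from a context that is itself the product of a fork.
function forkContext(parentContext: AgentContext, childId: string): AgentContext {
  if (parentContext.isForkedChild) {
    throw new Error(
      `Agent ${parentContext.agentId} is a forked child and cannot fork again`
    );
  }
  return { agentId: childId, isForkedChild: true };
}

const rootContext: AgentContext = { agentId: "root", isForkedChild: false };
const childContext = forkContext(rootContext, "child-1"); // allowed

let nestedForkRejected = false;
try {
  forkContext(childContext, "grandchild-1"); // rejected by the guard
} catch (_err) {
  nestedForkRejected = true;
}
```

Capping fork depth at one level keeps the number of concurrent children bounded by what the parent explicitly requests.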

This shows it isn’t just a “clever trick,” but a technique engineered into a feature that holds up in long-term use.

08

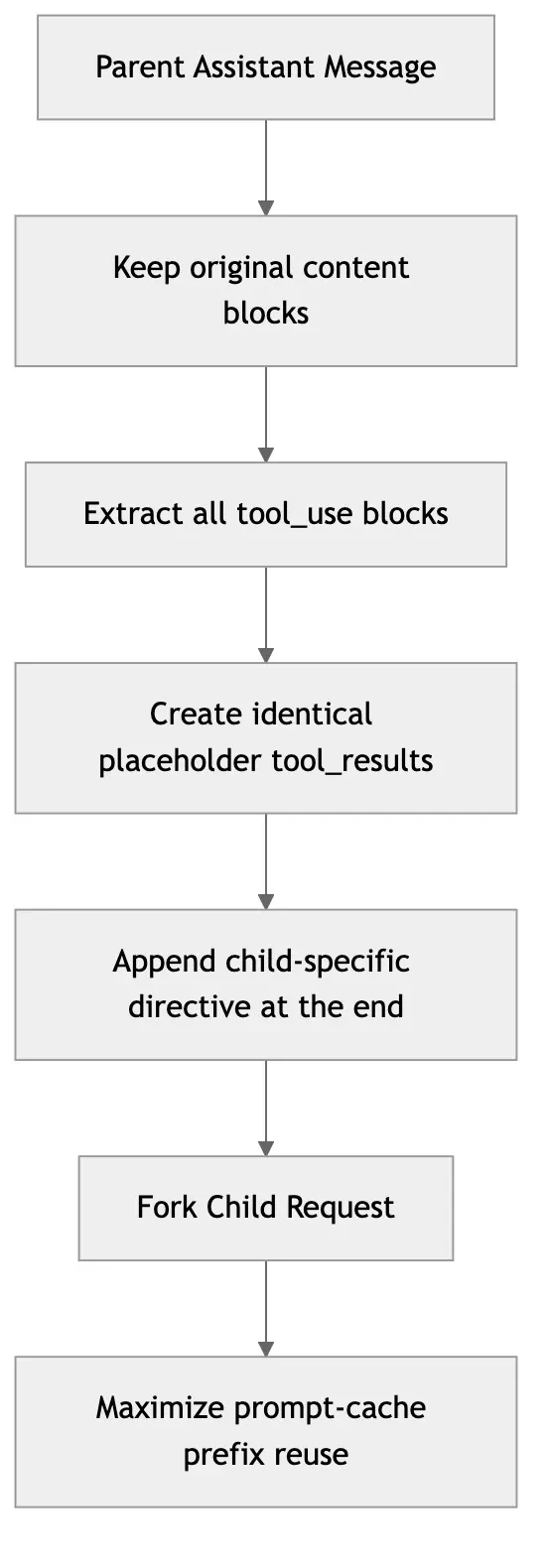

VII. Understanding the Highlight in One Diagram

09

VIII. Conclusion

The most commendable aspect of the fork subagent design is not that it makes sub-agents smarter, but that it makes them “cheaper.”

When agent systems are deployed in the real world, this type of cost-level innovation is often scarcer than feature-level innovation, because it requires the team to understand all of the following at once:

• Model context structure

• Prompt caching behavior

• System prompt stability

• Multi-agent scheduling patterns

Claude Code’s answer here is beautiful:

Don’t just let the agent clone itself; let the agent’s clones share the same brain prefix.