Digital Strategy Review | 2026

Claude Code Highlight 02 | Semantic Tool Concurrency: Why It’s Both Fast and Stable

By Uncle Fruit · Reading Time / 8 Min

Editor’s Note

This topic deserves a dedicated deep dive because many agents expose two major flaws as soon as they encounter real-world codebases: they are either too slow or too chaotic. Claude Code’s approach is remarkably mature; rather than blindly chasing concurrency, it integrates concurrency into the runtime semantics.

01

Semantic Tool Concurrency: Why It’s Both Fast and Stable

Once a multi-tool agent system enters a real-world environment, it faces a practical dilemma:

- Fully serial execution: Too slow.

- Fully concurrent execution: Too chaotic.

Claude Code’s answer to this problem is elegant: it doesn’t make arbitrary decisions about concurrency. Instead, it allows tools to declare their own concurrency semantics, and the runtime executes them in batches based on those semantics.

The core implementation can be found in:

- src/services/tools/toolOrchestration.ts

- src/tools.ts

- src/query.ts

02

I. Where exactly is the problem?

In a coding scenario, a single round of reasoning often generates multiple tool calls, such as:

- Searching for files

- Searching for text

- Reading multiple files

- Querying MCP resources

- Editing files

- Running shell commands

The nature of these calls varies significantly.

Suitable for concurrency

- Glob

- Grep

- FileRead

- Certain read-only MCP tools

Not suitable for concurrency

- File editing

- Writing files

- Tools dependent on the latest context state

- Shell/PowerShell calls with side effects

If a system lacks an intermediate layer to understand these differences, it is forced to choose between two bad options:

01 All serial: Ensures safety but provides a poor user experience.

02 All concurrent: Pursues speed but risks state corruption.

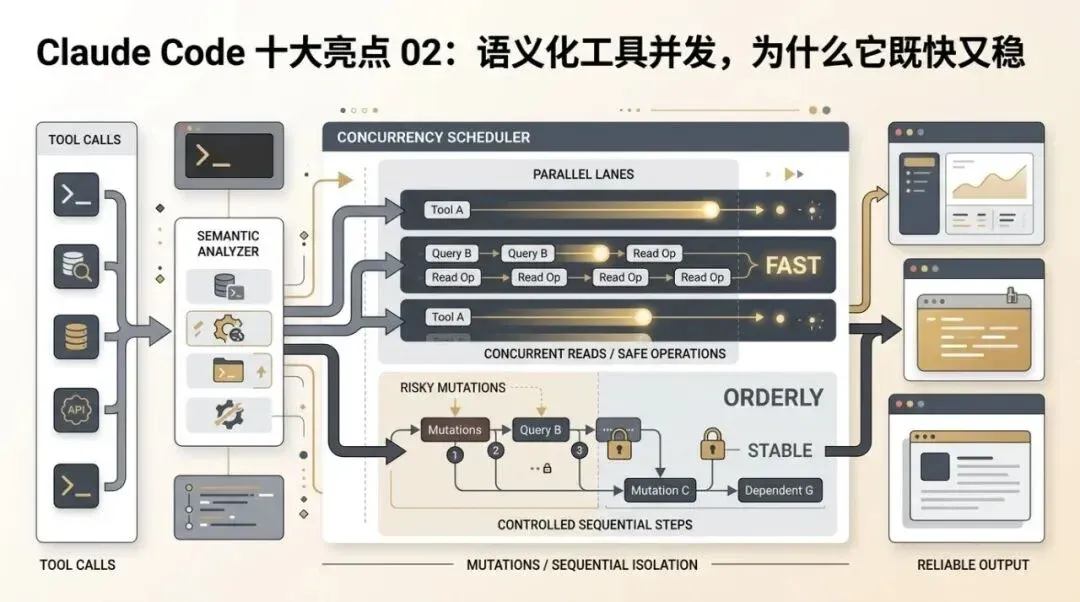

Claude Code has chosen a third path.

Concurrency is not the default. A call runs in parallel only after its tool declares that doing so is safe, and the runtime uses those declarations to partition calls into batches.

03

II. Claude Code’s Approach: Let Tools Express Their Own Concurrency Semantics

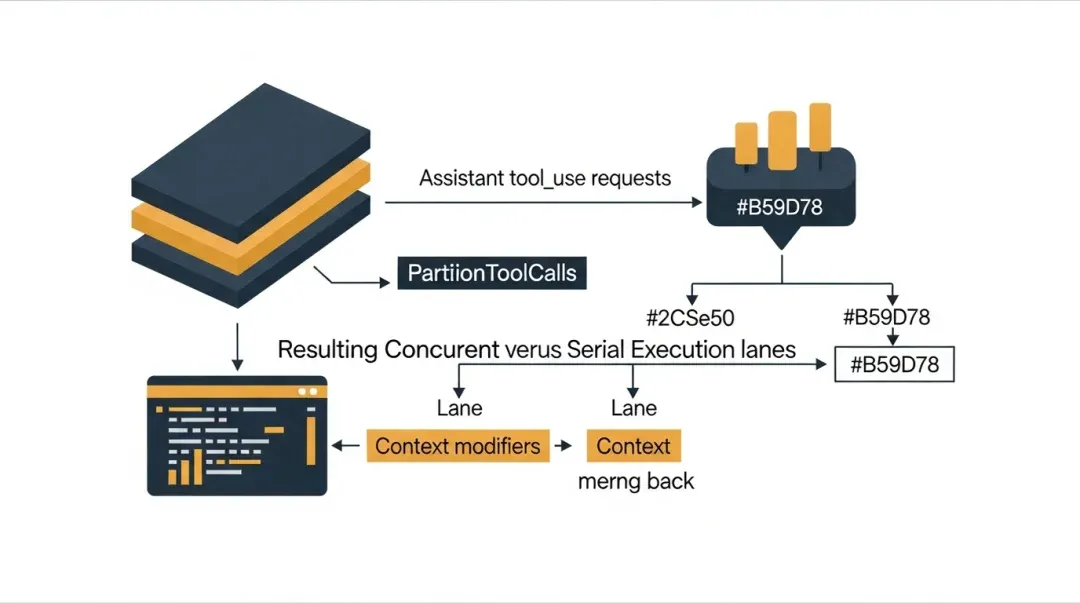

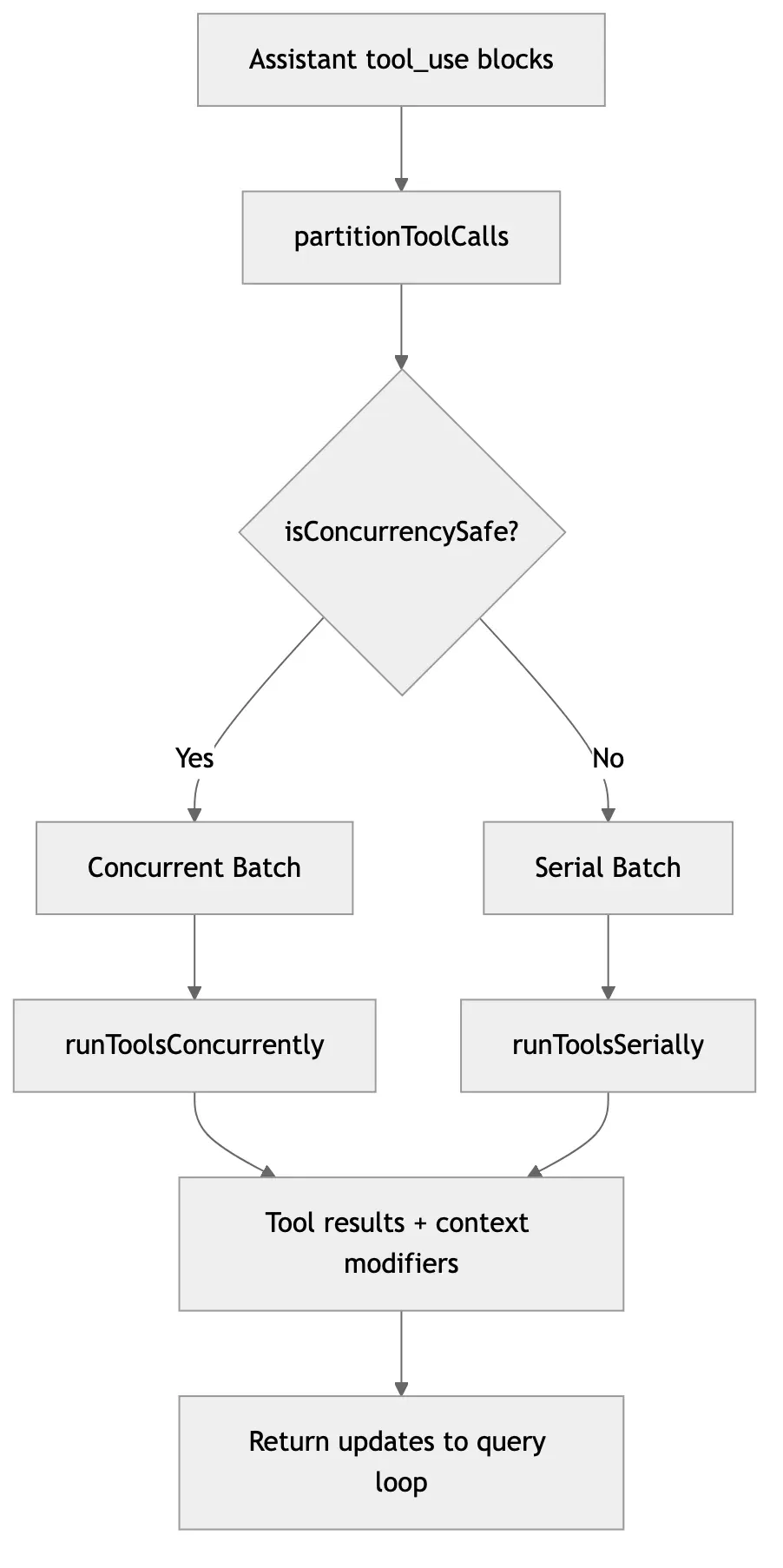

The core of toolOrchestration.ts is not a switch that “enables concurrency” but the function partitionToolCalls(...).

It performs the following steps:

01 Iterates through the tool_use blocks in the assistant message.

02 Identifies the corresponding tool definition.

03 Parses the input using the tool schema.

04 Calls the tool’s isConcurrencySafe(...) method.

05 Partitions the tool calls into batches, preserving their original order.

The result:

- Consecutive, safe tool calls are grouped together and executed concurrently.

- Other calls fall back to serial execution.

This is more robust than a simple “read tools are concurrent, write tools are serial” rule, because the decision-making power is delegated to the tool implementation itself.
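The partitioning steps above can be sketched roughly as follows. This is a minimal illustration, not the actual source: the `ToolUse`, `ToolDef`, and `Batch` shapes, the `parseInput` helper, the `FileEdit` tool name, and the choice to treat unknown tools or invalid inputs as unsafe are all assumptions made for the example. Only the names `partitionToolCalls` and `isConcurrencySafe` come from the article.

```typescript
// Hypothetical shapes for illustration; not Claude Code's actual types.
interface ToolUse {
  id: string;
  name: string;
  input: unknown;
}

interface ToolDef {
  name: string;
  // Returns the validated input, or null if it does not match the schema.
  parseInput(raw: unknown): Record<string, unknown> | null;
  // The tool itself decides whether this particular call may run in parallel.
  isConcurrencySafe(input: Record<string, unknown>): boolean;
}

interface Batch {
  concurrent: boolean;
  calls: ToolUse[];
}

function partitionToolCalls(calls: ToolUse[], tools: Map<string, ToolDef>): Batch[] {
  const batches: Batch[] = [];
  for (const call of calls) {
    const tool = tools.get(call.name);
    const input = tool ? tool.parseInput(call.input) : null;
    // Assumption: unknown tools and invalid inputs are treated as unsafe.
    const safe = tool !== undefined && input !== null && tool.isConcurrencySafe(input);
    const last = batches[batches.length - 1];
    if (safe && last !== undefined && last.concurrent) {
      last.calls.push(call); // extend the current run of consecutive safe calls
    } else {
      batches.push({ concurrent: safe, calls: [call] }); // start a new batch
    }
  }
  return batches;
}

// Usage with three toy tools.
const asObject = (raw: unknown): Record<string, unknown> | null =>
  typeof raw === "object" && raw !== null ? (raw as Record<string, unknown>) : null;

const demoTools = new Map<string, ToolDef>([
  ["Grep", { name: "Grep", parseInput: asObject, isConcurrencySafe: () => true }],
  ["FileRead", { name: "FileRead", parseInput: asObject, isConcurrencySafe: () => true }],
  ["FileEdit", { name: "FileEdit", parseInput: asObject, isConcurrencySafe: () => false }],
]);

const demoCalls: ToolUse[] = [
  { id: "1", name: "Grep", input: { pattern: "TODO" } },
  { id: "2", name: "FileRead", input: { path: "a.ts" } },
  { id: "3", name: "FileEdit", input: { path: "a.ts" } },
  { id: "4", name: "FileRead", input: { path: "a.ts" } },
];

const demoBatches = partitionToolCalls(demoCalls, demoTools);
// Calls 1 and 2 share a concurrent batch, 3 runs alone, 4 starts a new concurrent batch.
console.log(demoBatches.map((b) => [b.concurrent, b.calls.map((c) => c.id)]));
```

Because the safety check receives the parsed input, the same tool could in principle answer differently for different calls, which a static read/write list cannot express.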

04

III. Why this design is advanced

1. It shifts complexity from the model to the runtime

Large models are not inherently good at precise concurrency control. You can tell the model “read operations can be parallel” in a prompt, but that is merely a suggestion, not a guarantee.

Claude Code avoids relying on the model to remember this, letting the runtime automatically handle safe orchestration during execution.

This is a common pattern in mature systems:

- The model is responsible for expressing intent.

- The runtime is responsible for enforcing the execution strategy.

2. It preserves sequential semantics

Note that the scheduler does not rearrange concurrency-safe calls into an arbitrary execution graph; it only groups consecutive safe calls as they appear in the call sequence.

This is crucial because it preserves the local order of the assistant’s original reasoning. In other words, Claude Code pursues “being as fast as safely possible” rather than “extreme parallel reordering.”
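To see why order preservation matters, consider a toy simulation in which a read, an edit, and another read target the same file. Everything here (the `Call` type, the in-memory file map, the `run` helper) is hypothetical and exists only to contrast segment-based batching with a more aggressive strategy that hoists all reads ahead of the edit.

```typescript
// Toy in-memory "file system" and two scheduling strategies; all names are hypothetical.
type Call =
  | { kind: "read"; path: string }
  | { kind: "edit"; path: string; content: string };

// Executes calls in the given order and records what each read observed.
function run(order: Call[], files: Map<string, string>): string[] {
  const seen: string[] = [];
  for (const call of order) {
    if (call.kind === "read") {
      seen.push(files.get(call.path) ?? "");
    } else {
      files.set(call.path, call.content);
    }
  }
  return seen;
}

const sequence: Call[] = [
  { kind: "read", path: "a.ts" },
  { kind: "edit", path: "a.ts", content: "v2" },
  { kind: "read", path: "a.ts" },
];

// Segment-based batching keeps the original order: [read] -> [edit] -> [read].
const inOrder = run(sequence, new Map([["a.ts", "v1"]]));

// Aggressive reordering runs every read before the edit.
const hoisted = run(
  [...sequence.filter((c) => c.kind === "read"), ...sequence.filter((c) => c.kind === "edit")],
  new Map([["a.ts", "v1"]]),
);

console.log(inOrder); // the second read observes "v2"
console.log(hoisted); // both reads observe "v1"; the second read misses the edit
```

The second read was presumably issued to inspect the result of the edit, so only the order-preserving schedule gives the model what it asked for.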

3. It is naturally suited for code exploration

During the code exploration phase, a single round often involves numerous read-only calls. This is where concurrency yields the highest benefits.

Therefore, this design is particularly well-suited for a coding agent like Claude Code:

- Searching is much faster.

- State is less likely to be corrupted.

- The model doesn’t need to learn complex execution rules.

05

IV. The relationship between this highlight and query.ts

query.ts is responsible for extracting tool_use from the assistant’s output and calling runTools(...). However, the actual strategy for concurrency vs. serial execution is determined by toolOrchestration.ts.

This means Claude Code’s main loop isn’t just “see a tool, execute a tool.” Instead, it is:

01 Collect tool calls for the current round.

02 Plan batching at the runtime layer.

03 Feed execution results back into the main loop.

This upgrades the tool system from “immediate reactive calls” to a “scheduled execution phase.”
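A rough sketch of the execution stage of that loop might look like the following. The real `runTools(...)` signature is not shown in the article, so `runBatches`, `PlannedCall`, `PlannedBatch`, and `ToolResult` are invented names, and the batches are assumed to have been planned already. The point is only that batches run one after another, while calls inside a concurrent batch start together.

```typescript
// Hypothetical types; the real runTools(...) signature is not shown in the article.
interface PlannedCall {
  id: string;
  run: () => Promise<string>;
}
interface PlannedBatch {
  concurrent: boolean;
  calls: PlannedCall[];
}
interface ToolResult {
  id: string;
  output: string;
}

async function runBatches(batches: PlannedBatch[]): Promise<ToolResult[]> {
  const results: ToolResult[] = [];
  for (const batch of batches) {
    // Batches always run one after another, in planning order.
    if (batch.concurrent) {
      // Safe batch: start every call at once, then wait for all of them.
      const done = await Promise.all(
        batch.calls.map(async (c) => ({ id: c.id, output: await c.run() })),
      );
      results.push(...done);
    } else {
      // Unsafe batch: run strictly one call at a time.
      for (const c of batch.calls) {
        results.push({ id: c.id, output: await c.run() });
      }
    }
  }
  return results; // these would be fed back into the main loop as tool results
}

// Usage: fake tools that log when they start and finish.
const events: string[] = [];
const fakeCall = (id: string): PlannedCall => ({
  id,
  run: async () => {
    events.push(`start ${id}`);
    await Promise.resolve(); // yield once to simulate asynchronous I/O
    events.push(`end ${id}`);
    return `result of ${id}`;
  },
});

const demoRun = runBatches([
  { concurrent: true, calls: [fakeCall("grep"), fakeCall("read")] },
  { concurrent: false, calls: [fakeCall("edit")] },
]);

demoRun.then((results) => {
  console.log(results.map((r) => r.id)); // results keep the original call order
  console.log(events); // both reads start before either ends; the edit starts last
});
```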

06

V. Why this is more valuable than a simple thread pool

Some systems claim to support concurrent tool calls, but in essence, they are just:

- Sending requests asynchronously.

- Using Promise.all.

The problem with this implementation is that it ignores the semantics of the tools themselves.

Claude Code’s difference:

- Concurrency is not the default; it is confirmed by tool definitions.

- Context modifications are not applied out of order; they are converged via contextModifier.

- Tool execution remains within a unified system of permissions, hooks, and telemetry.

Therefore, it is not just a “faster tool executor,” but a semantic scheduler.
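One plausible way to converge context changes from a concurrent batch is to collect each tool's modifier and apply them in call order once the whole batch has finished. The sketch below assumes exactly that; the `Ctx` shape, the `Outcome` type, and `runConcurrentBatch` are hypothetical, and the article does not confirm that the real contextModifier mechanism works this way.

```typescript
// Hypothetical context and result shapes, for illustration only.
interface Ctx {
  readFiles: string[];
}
type ContextModifier = (ctx: Ctx) => Ctx;
interface Outcome {
  id: string;
  contextModifier?: ContextModifier;
}

async function runConcurrentBatch(
  calls: Array<() => Promise<Outcome>>,
  ctx: Ctx,
): Promise<Ctx> {
  // Promise.all resolves to results in call order, not completion order.
  const outcomes = await Promise.all(calls.map((call) => call()));
  // Apply modifiers only after the whole batch is done, in call order.
  return outcomes.reduce(
    (acc, o) => (o.contextModifier ? o.contextModifier(acc) : acc),
    ctx,
  );
}

// Usage: the second call finishes first, yet its modifier is applied second.
const completionOrder: string[] = [];
const yieldTimes = async (n: number): Promise<void> => {
  for (let i = 0; i < n; i++) await Promise.resolve();
};
const readCall = (path: string, delay: number) => async (): Promise<Outcome> => {
  await yieldTimes(delay);
  completionOrder.push(path);
  return {
    id: path,
    contextModifier: (c: Ctx) => ({ readFiles: [...c.readFiles, path] }),
  };
};

const finalCtx = runConcurrentBatch(
  [readCall("slow.ts", 5), readCall("fast.ts", 1)],
  { readFiles: [] },
);

finalCtx.then((ctx) => {
  console.log(completionOrder); // fast.ts completes before slow.ts
  console.log(ctx.readFiles); // modifiers were still applied in call order
});
```

With a bare `Promise.all` and tools that mutate shared state directly, the final context would depend on which call happened to finish first.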

07

VI. Direct impact on user experience

1. Faster exploration

When an agent needs to search many locations or read many files, the response feels significantly smoother.

2. Reduced state contention

Editing operations remain conservatively serial, preventing file state conflicts.

3. The agent feels like a “system that gets things done”

Users see the agent quickly fan out a series of read-only explorations rather than mechanically checking items one by one. This makes the system feel more like a skilled engineer than a single-threaded script.

08

VII. What is the cost of this design?

Of course, this design is not free.

1. Tool implementations must take responsibility for semantic declaration

Every tool must correctly implement its own concurrency safety check; otherwise, the scheduler cannot make the right decision.

2. Increased debugging complexity

Once context modifications originate from concurrent tools, the system must handle the application order of contextModifier more carefully.

3. Caution is needed for “seemingly read-only but actually side-effect-heavy” tools

If such a tool is incorrectly marked as concurrency-safe, the risks are significant.
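A conservative safety check for a shell-style tool could look something like this. It is only a sketch: the allowlist, the function name `isShellCallConcurrencySafe`, and the rejection rules are assumptions for illustration, and a production tool would need a far more careful command parser. The idea is to default to "unsafe" and allow concurrency only for commands that are clearly read-only.

```typescript
// Hypothetical allowlist; a real tool would need a far more careful parser.
const READ_ONLY_COMMANDS = new Set(["ls", "cat", "grep", "head", "wc"]);

function isShellCallConcurrencySafe(command: string): boolean {
  // Anything that chains, pipes, redirects, or substitutes is treated as unsafe.
  if (/[;&|><`$\n]/.test(command)) return false;
  // Only the program name is checked; unknown programs fall through to "unsafe".
  const program = command.trim().split(/\s+/)[0];
  return program !== undefined && READ_ONLY_COMMANDS.has(program);
}

console.log(isShellCallConcurrencySafe("cat src/query.ts")); // true
console.log(isShellCallConcurrencySafe("cat a.ts > b.ts")); // false: redirection writes a file
console.log(isShellCallConcurrencySafe("rm -rf build")); // false: not on the allowlist
console.log(isShellCallConcurrencySafe("grep -r TODO . | wc -l")); // false: pipes are rejected
```

The check deliberately rejects some harmless commands, such as the piped grep above. A false "unsafe" only costs speed, while a false "safe" can corrupt state.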

Overall, however, this cost is worth it because the benefits directly impact the most frequent phase of usage: exploration.

09

VIII. Understanding the process in one diagram

10

IX. Conclusion

Claude Code’s “Semantic Tool Concurrency” is not a flashy feature, but it solves the most realistic and frequent conflict between performance and stability in multi-tool agents.

Its brilliance lies in:

- Not pushing the responsibility of concurrency onto the model.

- Not relying on hard-coded, crude classification lists.

- Not sacrificing sequential semantics for speed.

Instead, it brings tool semantics directly into the runtime scheduling layer.

In short:

It allows the agent’s tool usage to function like program scheduling without losing the natural flow of large model reasoning.