Digital Strategy Review | 2026

Federal AI Procurement Shift: Trump Administration Orders Agencies to Phase Out Anthropic Models | Uncle Fruit’s AI Daily

By Uncle Fruit · Reading time: 8 min

Editor’s Note

Over the past 48 hours, the biggest AI story has shifted from the “model capability race” to “national-level procurement and governance allocation.”

On March 3 (U.S. time), multiple media outlets reported that the Trump administration has ordered federal agencies to phase out Anthropic models and migrate critical contracts to the OpenAI ecosystem. The true significance of this move lies not in who won a contract, but in “who gets to define the boundary conditions for AI in the public sector.”

For product and growth teams, this event directly affects three areas: how compliance requirements are set, how enterprise buyers select vendors, and what evidence of controllability you must provide in government and large-enterprise tenders.

01

Today’s Headline News

Event Timeline

On March 2, Anthropic released “National Security and American Leadership,” publicly stating its position: “supporting national security cooperation while maintaining red lines.” The document emphasized that its models would be used for defense, intelligence analysis, and cybersecurity, while keeping safety restrictions on high-risk use cases.

On the same day, OpenAI released “OpenAI’s DoD Partnership: Policy Update” and “OpenAI’s U.S. Defense Department Contract: FAQ,” clarifying the boundaries of its partnership with the U.S. Department of Defense and reiterating policy clauses “prohibiting use for mass surveillance and autonomous lethal weapons.”

By March 3, Reuters, the Financial Times, and The Washington Post reported that the White House had directed federal agencies to phase out Anthropic models and migrate away from them. Some reports suggested a first-phase migration deadline of April 30.

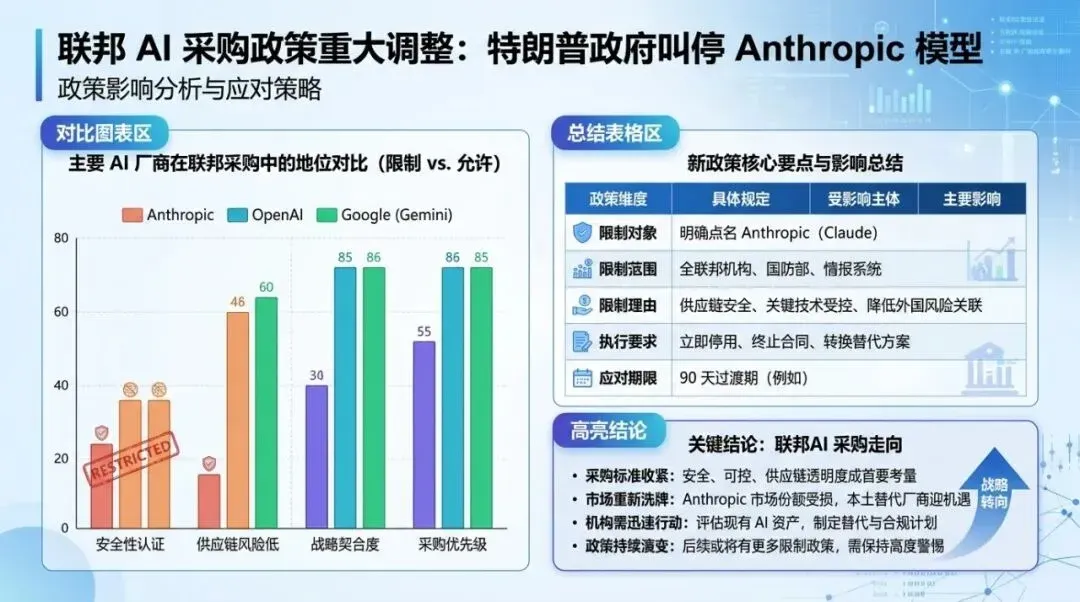

Key Fact Breakdown

01 This change is occurring at the “federal procurement level,” affecting budgets, contracts, compliance, and chains of accountability, rather than being a single department’s pilot project.

02 The core of the controversy centers on “whether to accept unrestricted military access” and “who gets to set the safety red lines.”

03 OpenAI simultaneously released policy clarifications, sending a clear message: cooperation can expand, but policy constraints must be written into contracts and execution mechanisms.

Why This is Today’s Headline

The impact of this news extends far beyond an “AI company PR war.” It will rewrite the evaluation framework for large models in the public sector, raising the question from “Is the model’s performance strong?” to “Is its governance trustworthy, is accountability traceable, and can the supplier be replaced?”

To put it bluntly, the federal procurement system is telling the market with real money that the key to winning the next stage is “governance capability.”

02

Headline Analysis: Why This Matters

1. Business Level: Federal Procurement is Redefining the Threshold for SaaS AI

Over the past year, many teams operated under the assumption that if their model performance led the market, they could capture more B2B scenarios. That logic is clearly no longer sufficient. Federal agencies and large regulated enterprises are shifting their evaluation criteria to three areas:

• Can you provide verifiable safety policies and violation handling mechanisms?

• Do you support fine-grained auditing (who called it, when, and what was the result)?

• When policies change, can your system complete a migration or downgrade operation within 30–90 days?
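The auditing criterion above (who called it, when, and with what result) can be made concrete with a small append-only log. The sketch below is a minimal illustration, not any vendor’s actual audit format: the field names (`caller`, `model`, `action`, `result`) are hypothetical, and it assumes tamper evidence via a SHA-256 hash chain, where each record stores the previous record’s hash, plus JSON Lines export for auditors.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    """One model call: who made it, when, and what happened (field names are hypothetical)."""
    caller: str   # user or service identity
    model: str    # which model/provider served the call
    action: str   # e.g. "summarize", "classify"
    result: str   # e.g. "allowed", "denied", "error"
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )
    prev_hash: str = ""    # hash of the previous record (the chain link)
    record_hash: str = ""  # hash of this record's contents plus prev_hash


class AuditLog:
    """Append-only log in which each record hashes its predecessor, so edits are detectable."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first record

    def __init__(self) -> None:
        self.records: list[AuditRecord] = []

    @staticmethod
    def _digest(rec: AuditRecord) -> str:
        # Hash every field except the stored hash itself, in a stable key order.
        payload = {k: v for k, v in asdict(rec).items() if k != "record_hash"}
        return hashlib.sha256(
            json.dumps(payload, sort_keys=True).encode("utf-8")
        ).hexdigest()

    def append(self, caller: str, model: str, action: str, result: str) -> AuditRecord:
        prev = self.records[-1].record_hash if self.records else self.GENESIS
        rec = AuditRecord(caller, model, action, result, prev_hash=prev)
        rec.record_hash = self._digest(rec)
        self.records.append(rec)
        return rec

    def verify(self) -> bool:
        """Recompute every hash; an edited or reordered record breaks the chain."""
        prev = self.GENESIS
        for rec in self.records:
            if rec.prev_hash != prev or rec.record_hash != self._digest(rec):
                return False
            prev = rec.record_hash
        return True

    def export_jsonl(self) -> str:
        """Export as JSON Lines, one record per line, for handing to an auditor."""
        return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in self.records)
```

A hash chain does not stop someone with write access from rewriting the whole log, but it makes silent edits to individual records detectable, which is usually what a “fine-grained, exportable audit” requirement is probing for.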

This means the industry will see a “valuation logic shift”: the premium on pure model capability will be compressed, while platform delivery, compliance capabilities, government relations, and ecosystem stability will be repriced.

2. Policy Level: AI Governance Enters the “Contractual Execution” Phase

Many people read AI governance as a statement of principles. In practice, governance takes effect through contract clauses, procurement processes, acceptance criteria, and audit cycles.

Looking at the actions from March 2 to March 3, Washington’s direction is clear:

• High-risk scenarios must have clearly defined, written boundaries;

• Suppliers must accept continuous auditing;

• In the event of policy conflicts, the government will prioritize controllability and replaceability.

This logic is likely to spill over into critical industries such as finance, healthcare, and energy. The first question a major client asks you in the future may not be “what is your inference speed,” but “how do you ensure we don’t go offline when regulations change?”

3. Technical Level: Model Companies Will Be Forced to Strengthen “Operational Governance”

Today, most teams talk about “safety” only in terms of policy documents. The next stage requires engineering implementation:

• Task-level permissions and denial policies (enforced dynamically by role, scenario, and risk level);

• Audit logs enabled by default and exportable;

• Dual-track mechanisms for sensitive scenarios (model suggestion + human review + traceable signature chain);

• Emergency switching capabilities (ensuring business continuity when replacing suppliers).
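The first item, task-level permissions and denial policies, amounts to a decision function evaluated on every call. Below is a minimal sketch under stated assumptions: the roles, scenario names, 0-to-3 risk scale, and review threshold are all hypothetical (the prohibited scenarios echo the mass-surveillance and autonomous-weapons clauses mentioned earlier), and the `HUMAN_REVIEW` outcome stands in for the dual-track “model suggestion + human review” path.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    HUMAN_REVIEW = "human_review"  # model suggests, a person signs off


@dataclass(frozen=True)
class Request:
    role: str      # e.g. "analyst", "admin"
    scenario: str  # e.g. "translation", "threat_intel"
    risk: int      # hypothetical scale: 0 (low) to 3 (critical), scored upstream


# Hypothetical rules; in practice these would live in versioned, reviewed config.
PROHIBITED_SCENARIOS = {"mass_surveillance", "autonomous_weapons"}
ALLOWED_SCENARIOS_BY_ROLE = {
    "analyst": {"translation", "summarization", "threat_intel"},
    "admin": {"translation", "summarization", "threat_intel", "config_change"},
}
REVIEW_THRESHOLD = 2  # risk at or above this is routed to a human reviewer


def decide(req: Request) -> Decision:
    """Evaluate one call at request time, most restrictive rule first."""
    if req.scenario in PROHIBITED_SCENARIOS:
        return Decision.DENY  # hard red line, regardless of role
    if req.scenario not in ALLOWED_SCENARIOS_BY_ROLE.get(req.role, set()):
        return Decision.DENY  # default-deny unknown roles and unlisted scenarios
    if req.risk >= REVIEW_THRESHOLD:
        return Decision.HUMAN_REVIEW
    return Decision.ALLOW
```

Checking the hard red lines first and denying anything not explicitly allowed keeps behavior predictable when a new scenario appears before anyone has written a policy for it.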

If you cannot build these capabilities, it will be difficult to enter the core systems of governments and ultra-large enterprises.

4. Ecosystem Level: Multi-Model Coexistence Will Become the New Normal

This event also sent a practical signal: the architectural risk of being tied to a single model has been made explicit. More and more enterprises will build architectures featuring “multi-model routing + unified security layer + unified observability layer,” with the goal of diversifying policy and supply risks.
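The “multi-model routing + unified security layer + unified observability layer” pattern can be sketched in a few dozen lines. This is an illustrative design, not a specific gateway product: providers are modeled as plain `prompt -> text` functions (real SDK clients would need wrapping), and `ModelRouter`, `ProviderUnavailable`, and the `guard` callback are hypothetical names. The same guard runs before every model, failover follows a fixed preference order, and `disable()` acts as the emergency switch.

```python
from typing import Callable, Sequence

# A provider is any function that takes a prompt and returns text (hypothetical
# interface; real SDK clients would be wrapped to fit it).
Provider = Callable[[str], str]


class ProviderUnavailable(Exception):
    """Raised by a wrapped provider when it is down or rate-limited."""


class ModelRouter:
    def __init__(
        self,
        providers: Sequence[tuple[str, Provider]],
        guard: Callable[[str], bool],
    ) -> None:
        self.providers = list(providers)  # ordered by preference
        self.guard = guard                # unified security check, same for every model
        self.disabled: set[str] = set()   # providers switched off by policy
        self.trace: list[str] = []        # unified observability: outcome per attempt

    def disable(self, name: str) -> None:
        """Emergency switch: stop routing to a provider without touching callers."""
        self.disabled.add(name)

    def complete(self, prompt: str) -> str:
        if not self.guard(prompt):
            raise PermissionError("blocked by security layer")
        for name, call in self.providers:
            if name in self.disabled:
                continue
            try:
                out = call(prompt)
            except ProviderUnavailable:
                self.trace.append(f"{name}:unavailable")
                continue  # fail over to the next provider in order
            self.trace.append(f"{name}:ok")
            return out
        raise RuntimeError("no provider available")
```

Because callers only see `complete()`, removing a provider becomes a configuration change rather than a rewrite of business logic, which is what a short replacement window demands.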

From an industrial perspective, this will catalyze a new market for the middle layer: model gateways, policy orchestration, audit platforms, and compliance template services.

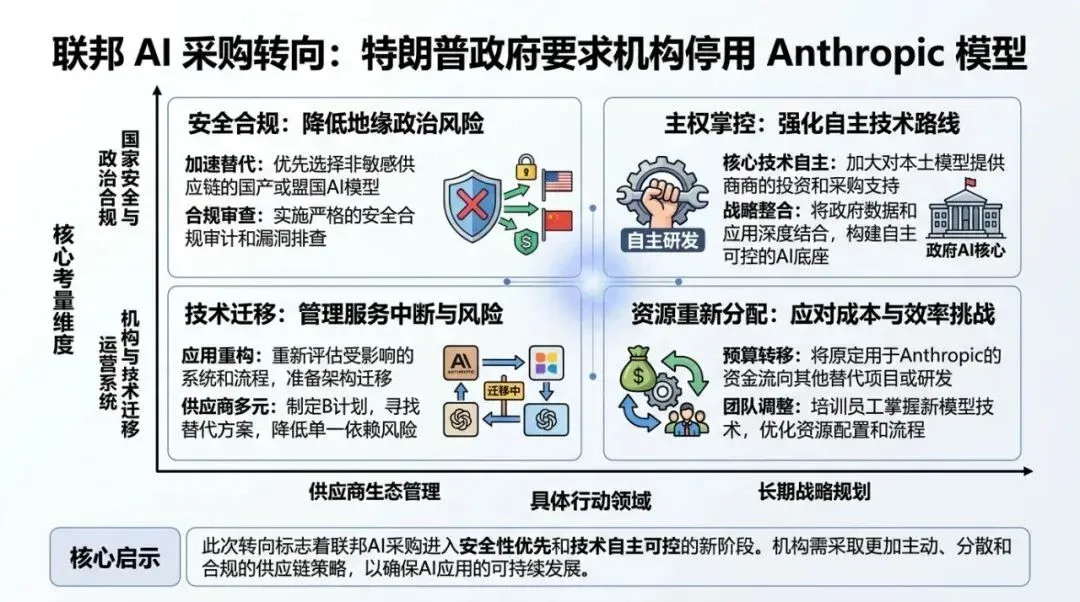

Flowchart used to explain the methodology execution path.

03

Uncle Fruit’s Perspective

I have a very pragmatic assessment for teams building AI products: the core challenge of enterprise-level competition in 2026 has shifted from “building a smarter model application” to “building an AI system that is auditable, replaceable, and resilient.”

I suggest you push forward with three things this week:

01 Conduct a “Compliance Stress Test”: Check whether your core processes survive one scenario. If regulators restrict your primary model within 30 days, can you swap in a replacement and maintain your SLA without changing business logic? If not, add a middle layer immediately.

02 Convert safety policies from documents to a policy engine: Write “prohibited scenarios, downgrade conditions, and human review thresholds” as machine-executable rules so they take effect automatically during calls, rather than relying on human memory.

03 Equip your sales and customer success teams with a “Governance Pitch”: What clients really care about now is how you ensure stable service amid policy fluctuations. Clearly explain your auditing, rollback, and migration capabilities, and your deals will close noticeably faster.
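The compliance stress test in item 01 can be automated. A minimal sketch, assuming your core flows can be replayed as a list of prompts and your models sit behind an ordered fallback chain; the function names and SLA thresholds (99% success, 2-second p95 latency) are hypothetical placeholders for your own targets. It bans the primary model, replays the traffic, and reports whether the SLA still holds.

```python
import math
import time
from typing import Callable, Sequence

Provider = Callable[[str], str]  # hypothetical: prompt in, text out


def call_with_fallback(
    prompt: str, chain: Sequence[tuple[str, Provider]], banned: set[str]
) -> str:
    """Try providers in preference order, skipping any the (simulated) regulator restricted."""
    for name, call in chain:
        if name in banned:
            continue
        try:
            return call(prompt)
        except Exception:  # sketch: treat any provider error as "try the next one"
            continue
    raise RuntimeError("no provider available")


def stress_test(
    prompts: Sequence[str],
    chain: Sequence[tuple[str, Provider]],
    banned: set[str],
    min_success_rate: float = 0.99,  # hypothetical SLA target
    max_p95_seconds: float = 2.0,    # hypothetical SLA target
) -> dict:
    """Replay core-flow prompts with restricted models removed; report whether the SLA holds."""
    if not prompts:
        raise ValueError("need at least one prompt")
    latencies: list[float] = []
    failures = 0
    for prompt in prompts:
        start = time.perf_counter()
        try:
            call_with_fallback(prompt, chain, banned)
        except RuntimeError:
            failures += 1
        latencies.append(time.perf_counter() - start)
    latencies.sort()
    p95 = latencies[math.ceil(0.95 * len(latencies)) - 1]  # nearest-rank percentile
    success_rate = 1 - failures / len(prompts)
    return {
        "success_rate": success_rate,
        "p95_seconds": p95,
        "passed": success_rate >= min_success_rate and p95 <= max_p95_seconds,
    }
```

For example, `stress_test(prompts, [("primary", primary), ("backup", backup)], banned={"primary"})` passes only if the backup can carry the replayed traffic; if the chain contains only the primary, every call fails and the test flags it.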

Three indicators to watch over the next 90 days:

• Whether federal procurement produces more explicit AI compliance templates;

• Whether top model vendors disclose more granular defense/public sector policies;

• Whether large enterprise RFPs list “replaceable architecture” as a hard requirement.

If these three indicators strengthen simultaneously, AI engineering will officially enter the “Governance Engineering” cycle.


04

Other Headline News

Google vs. SerpAPI Litigation Enters Procedural Defense Phase

The dispute surrounding search scraping, data usage, and service boundaries continues to escalate. For SEO and data product companies, such cases will continue to impact the API ecosystem and compliance costs for data collection. Source: https://serpapi.com/blog/google-v-serpapi-motion-to-dismiss-why-were-in-the-right/

AI Correctness Issues Become More Evident in High-Value Tasks

John D. Cook’s case study discusses the risk of models being “plausible but factually incorrect” in professional financial contexts, serving as a direct warning for the rollout of automated research and financial Copilots. Source: https://www.johndcook.com/blog/2026/03/02/an-ai-odyssey-part-1-correctness-conundrum/

LLM Personality Engineering Moves from Prompting Tricks to Procedural Methods

The developer community is shifting “personality design” from inspiration-based writing to structured templates, which will lead to higher consistency for agents in customer service, sales, and education, and make A/B evaluation easier. Source: https://seangoedecke.com/giving-llms-a-personality/

AI Content Quality Controversy Continues to Grow, Increasing Platform Governance Pressure

The “AI slop” discussion has spread from creator communities to distribution platforms and brands. Expect to see more platforms increase the weight of source credibility and original signals in their recommendation strategies. Source: https://pluralistic.net/2026/03/02/nonconsensual-slopping/

Transitive Trust in the AI Supply Chain Re-examined

The engineering community is beginning to emphasize the systemic risks of dependency chains, build chains, and package management naming strategies. For AI application teams, this will drive SBOMs, signature verification, and least-privilege deployment to become standard practices. Source: https://nesbitt.io/2026/03/02/transitive-trust.html
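As one small example of the practices named above, a deployment step can refuse any dependency or model artifact whose digest does not match a reviewed pin. This is a sketch of hash pinning, which is simpler than full signature verification (it proves integrity against a recorded value, not who published the file); the lockfile format, a plain name-to-SHA-256 mapping, and the function names are hypothetical assumptions.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 16) -> str:
    """Stream the file so large artifacts (e.g. model weights) need not fit in memory."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def verify_pinned(path: Path, lockfile: dict[str, str]) -> None:
    """Raise if the artifact is unpinned or its digest differs from the reviewed value.

    `lockfile` is a hypothetical mapping of artifact file name -> expected SHA-256 hex digest.
    """
    expected = lockfile.get(path.name)
    if expected is None:
        raise ValueError(f"{path.name} is not pinned; refusing to load")
    # Constant-time comparison; both sides are lowercase ASCII hex strings.
    if not hmac.compare_digest(sha256_of(path), expected.lower()):
        raise ValueError(f"{path.name} digest mismatch; possible tampering")
```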


05

Trends and Opportunities

1) Opportunity: Infrastructure for “Governable AI” will see growth

Model routing, policy engines, auditing and logging, and risk-graded execution, capabilities that were once “customized for major clients,” will be rapidly productized. Whoever turns these into standardized components first will capture a new share of 2026 enterprise budgets.

2) Opportunity: Compliance narratives will become the main sales battlefield

Today, many teams’ market narratives are still stuck on “faster and stronger.” The more effective narrative going forward is “reliable delivery in an uncertain policy environment.” This will directly affect sales cycles and renewal rates.

3) Risk: Single-model binding will become an operational risk

If you tie your core processes to a single model and a single supply chain, fluctuations in policy, contracts, or pricing could shatter your cost structure. Multi-model architectures and policy abstraction layers should be implemented as soon as possible.

4) Risk: Building features without auditing will lead to losing key clients

Major clients now want systems that are “deployable, explainable, and accountable.” Impressive demos alone will find it increasingly difficult to pass procurement and risk-control reviews.