Digital Strategy Review | 2026

Gemini 3.1 Music Creation Review: How Far Is It from a Professional Music Model?

By Mr. Guo · 8 Min Read

Foreword

Those who know me are aware that I am a bit of an oddity: a professional musician turned software industry veteran. (My game soundtrack work, such as the music for early versions of Happy Elements’ PopStar!, has probably reached more people than I have personally.)

As an early adopter of AI music applications and commercial experiments, and someone currently building an AI SaaS product in the audio space, I felt obliged to review Gemini 3.1’s “Create music” capability.

If you’ve clicked “Create music” in Gemini recently, you’ve likely felt a mix of pleasant surprise and awkwardness.

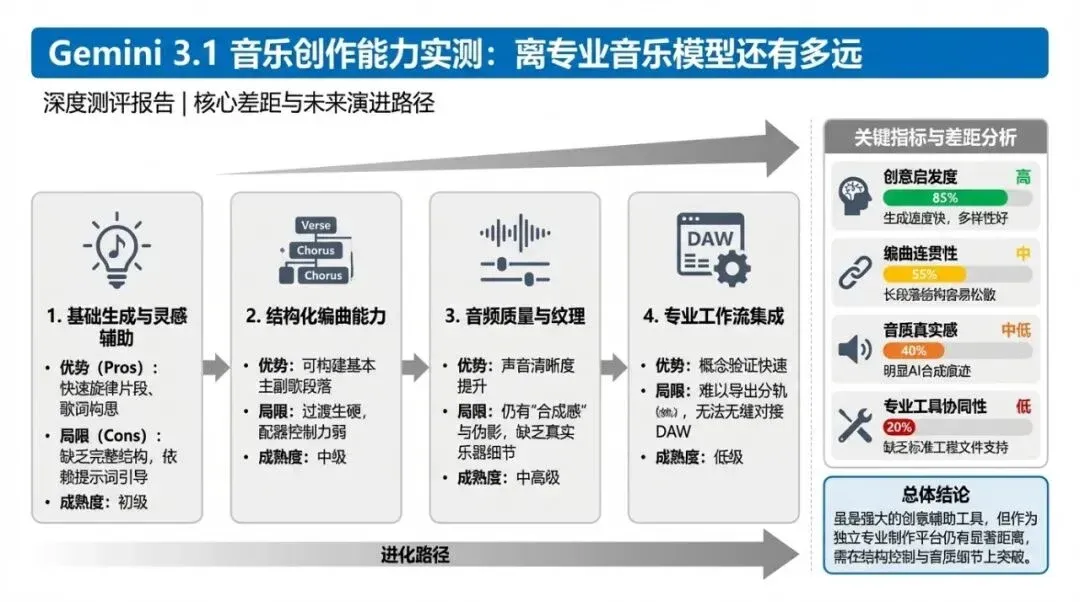

This isn’t a “feature update” post; it’s a hands-on review. I’ll lead with the conclusion: Gemini’s current music capabilities make it an excellent “semantic-driven music sketching tool,” but it is not yet a production system capable of reliably replacing professional music generation platforms.

01

A One-Sentence Definition: What Is It, Really?

Let’s clarify the terminology to keep our discussion on track.

Many say, “Gemini 3.1 now supports music creation.” From the user’s point of view that is accurate, but strictly speaking we need to distinguish two layers of the tech stack:

- 01: The Gemini App has added a new music generation tool.

- 02: The music model behind this tool is Lyria 3; it is not the Gemini text model composing music directly.

According to Google’s official announcement on February 18, 2026, the Gemini App now integrates Lyria 3, which generates 30-second tracks from text or image prompts (and from video in some scenarios). The feature is currently in beta.

This distinction is crucial because it dictates how you should use it:

- Treat it as an “inspiration sketchpad,” and you’ll find it very useful.

- Treat it as a “Digital Audio Workstation (DAW),” and you’ll quickly be disappointed.

02

How I Tested It

This round of testing was not limited to a single genre; I deliberately covered prompts of varying complexity.

The test cases and the prompts I used:

- Synthwave (Instrumental)

  - Prompt: I like Synthwave electronic music. Please generate a track for me, instrumental only.

- Chill Lounge Fusion Jazz (Female Vocals)

  - Prompt: Generate a fusion jazz track suitable for a quiet lounge. Include female vocal humming; you decide the lyrics.

- Dubstep (Explosive Rhythm)

  - Prompt: Generate a Dubstep dance track with an explosive rhythm.

- Guofeng Pop (Traditional Instruments + Opera Vocals)

  - Prompt: Generate a Chinese-style pop song. Include traditional Chinese instruments, but keep it pop-oriented rather than pure traditional music. Include female vocal humming and sections with operatic vocal techniques.

- Progressive Metalcore (Emphasizing Breakdown)

  - Prompt: Generate a progressive metalcore track, highlighting the breakdown section.

- Cinematic Fantasy Score (“Harry Potter Vibe”)

  - Prompt: Mimic the theme of a Harry Potter-style score and generate a piece of cinematic music.

- Impressionist Piano (“Debussy-esque + Late Romanticism”)

  - Prompt: I want you to become a contemporary Debussy. Give me an impressionist piano piece similar to Clair de Lune, with a touch of late Romanticism.

- Reference-Based Creation (Upload music for “style-based recreation”)

  - Prompt: Mimic this music and create something in a similar style.

This wasn’t a test of “can it make sound,” but rather an evaluation of four critical factors:

- 01: Length and structural control.

- 02: Semantic adherence.

- 03: Style recognition and migration.

- 04: Musicality and production finish.

Figure: flowchart of the testing methodology.

03

Core Conclusions (Read this first)

Conclusion 1: It’s still limited to 30-second clips, not a full-song pipeline.

The most critical finding: when you request a “full 3-minute track,” Gemini’s text response claims it has “generated the full version,” but the audio it actually delivers is still capped at a short clip.

This matches the official statements: the Gemini App currently delivers music clips of “up to 30 seconds.” It also lacks the extend-the-track step that platforms like Suno offer; at the very least, the app has no mature “Extend/Continue” workflow.

This is not a minor difference; it’s a fundamental product positioning gap:

- Gemini Music is more like “rapid expression.”

- Professional music models are more like “programmable production.”

Conclusion 2: Strong semantic understanding; light prompts keep it “on track.”

My tests are fairly persuasive on this point. Many of my prompts were not “engineered” at all, yet the model generally stayed on the right track:

- Synthwave felt neon-electronic.

- Guofeng Pop delivered that traditional texture.

- Lounge Fusion Jazz was softer and more laid-back.

This doesn’t necessarily mean the music model itself is exceptionally powerful; rather, Gemini’s semantic understanding combined with Lyria’s generation makes “intent translation” very smooth. For the average user, this greatly lowers the barrier to entry: you don’t need to learn complex prompt syntax to get listenable results.

Conclusion 3: It currently lacks reliable “reference-based creation” capabilities.

When I uploaded reference music and asked for a similar style, the results did not match the reference. I consider this observation critical, and it is one of the most valuable findings in this review.

My assessment (based on observed behavior and the documented capability boundaries):

- The main path for the Gemini App’s “Create music” remains semantic-driven.

- It accepts images and video as semantic inspiration, but makes no public promise of “high-fidelity style transfer” from uploaded audio.

- When you feed it audio, it most likely uses Gemini’s general multimodal understanding to translate that audio into a new music generation request.

- This pipeline is good at “understanding the content theme” but poor at “precisely replicating musical style fingerprints.”

The result: it knows the “vibe” you want, but it can’t grasp the “structural details” of the sound.

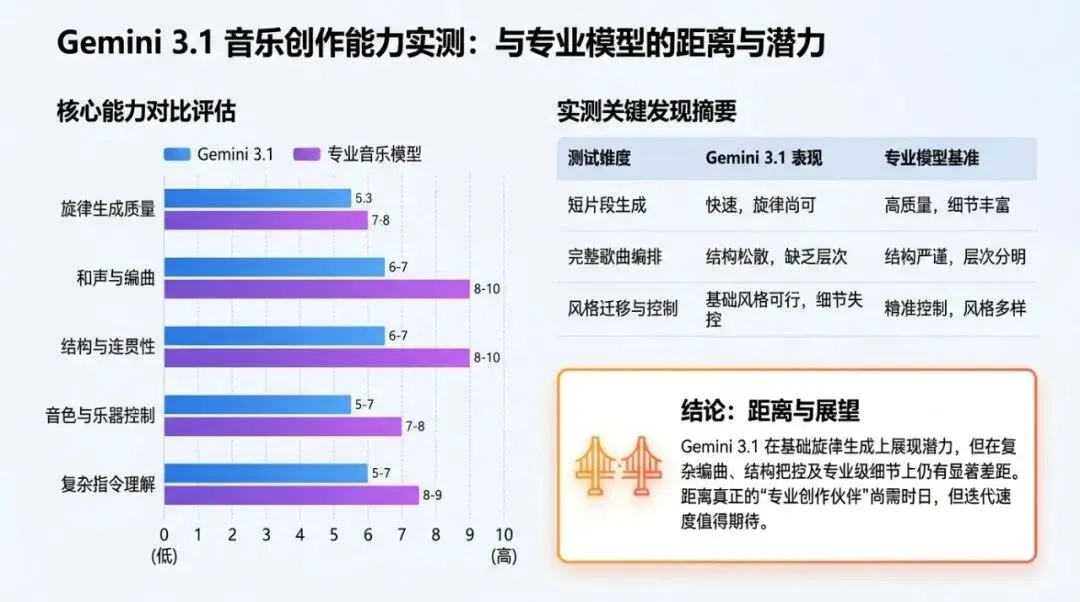

Conclusion 4: Audio quality and instruction adherence haven’t reached the level of professional music models.

My genre-by-genre evaluations bear this out:

- Guofeng Pop: The direction is right, but the operatic vocal technique wasn’t truly delivered.

- Dubstep: It contains signature elements, but musicality and melodic organization are noticeably weak.

- Progressive Metalcore: It sounds “heavy,” but the style is off, and the breakdown instruction wasn’t effectively highlighted.

This shows that while the model is decent at “label-level style hits,” it is unstable in professional areas like “sub-genre syntax, structural section control, and detailed performance style.”

For casual creators, this is “usable”; for music producers, it is “for reference, not for reliance.”

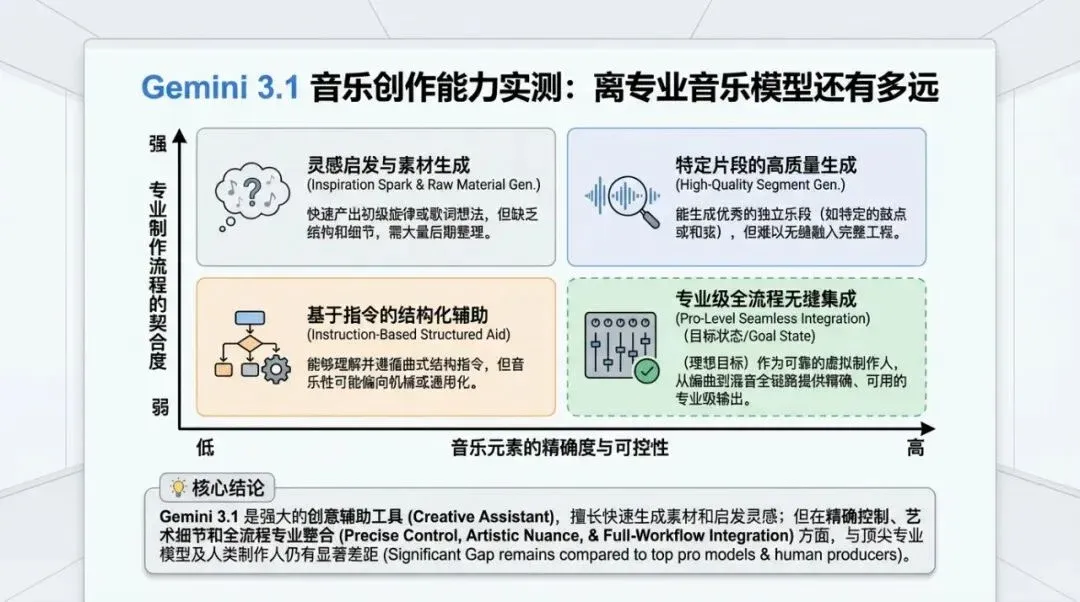

Figure: data chart summarizing the key comparisons and conclusions.

04

The Details Most Worthy of Industry Attention

1) Safety policies are explicitly affecting the creative experience.

When I asked it to “mimic Harry Potter” or “mimic Debussy’s Clair de Lune,” I ran into typical copyright and style-mimicry guardrails:

- Sometimes it directly refuses to “mimic specific IPs/composers.”

- Sometimes it offers an alternative with a “similar atmosphere but original expression.”

Official documentation confirms this: Lyria 3 is designed for original expression, not direct imitation of living or existing artists. Even if you name a specific artist in your prompt, the name is treated only as broad inspiration. (Honestly, I find this a bit hypocritical: isn’t “original expression” itself a product of training on works by contemporary and earlier artists?)

2) “Being able to talk” does not equal “being able to arrange a full track.”

Gemini’s response text is very comprehensive, often mentioning “grand symphonic elements, segmented progression, climax building, and spatial reverb.” However, listening to the results, these descriptions don’t always align with the audio output.

This is a common issue in many current multimodal products:

- • Text explanation capability is strong.

- • Generation results are still unstable in the details.

Therefore, when testing music models, don’t just look at “how it describes itself”—always judge by the audio results.

Figure: matrix of applicability boundaries and strategy selection.

05

A “Usability Map” by Creative Scenario

A. Scenarios ready for use now

- 01: Social media short-form BGM (under 30 seconds).

- 02: Inspiration sketching and style exploration.

- 03: Low-barrier composition experience for non-professionals.

- 04: Quickly creating mood-board demos for team communication.

B. Barely usable, requiring heavy manual intervention

- 01: Short tracks that require specific sub-genre idioms (e.g., heavy-music sub-genres).

- 02: Works requiring strict structural control.

- 03: Works with specific performance style requirements for vocals.

C. Scenarios not recommended for reliance

- 01: Full 3-4 minute commercial songs in one go.

- 02: High-consistency reference style migration/re-arrangement.

- 03: Production workflows requiring release-grade arrangement and mixing quality.

06

Practical Advice for Creators (Use it now)

Suggestion 1: Treat it as an “ideation layer,” not a “final master layer.”

The correct workflow isn’t “let it output the final product,” but:

- Use Gemini to quickly test directions.

- Select the 1-2 most promising motifs.

- Move to professional tools for structural expansion, arrangement refinement, and mixing/mastering.

Suggestion 2: Write “functional instructions” in prompts, not just “aesthetic adjectives.”

Instead of just writing “explosive Dubstep,” add:

- Target tempo range (BPM).

- Sectional intent (intro / build / drop / breakdown).

- Instrument roles (sub bass, growl lead, snare pattern).

- Vocal type (if needed).

Even if it doesn’t hit 100%, this will significantly reduce the probability of it going off-track.
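To make this concrete, here is a minimal Python sketch that turns those four items into a single prompt string. `MusicBrief` and `to_prompt` are hypothetical names, and the BPM values in the usage example are illustrative only. Gemini’s “Create music” takes ordinary natural-language text, so this merely helps you write a more structured prompt; it does not call any Gemini or Lyria API.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class MusicBrief:
    """Hypothetical structure for the functional parts of a music prompt."""

    genre: str
    bpm_range: Optional[Tuple[int, int]] = None  # target tempo range in BPM
    sections: List[str] = field(default_factory=list)  # e.g. intro/build/drop
    instruments: Dict[str, str] = field(default_factory=dict)  # role -> description
    vocals: Optional[str] = None  # None means instrumental

    def to_prompt(self) -> str:
        """Render the brief as one plain-language prompt string."""
        parts = [f"Generate a {self.genre} track."]
        if self.bpm_range:
            low, high = self.bpm_range
            parts.append(f"Tempo: {low}-{high} BPM.")
        if self.sections:
            parts.append("Structure: " + " -> ".join(self.sections) + ".")
        if self.instruments:
            roles = "; ".join(f"{role}: {desc}" for role, desc in self.instruments.items())
            parts.append(f"Instrument roles: {roles}.")
        # State the vocal choice explicitly either way so the model does not have to guess.
        parts.append(f"Vocals: {self.vocals}." if self.vocals else "Instrumental only.")
        return " ".join(parts)


if __name__ == "__main__":
    brief = MusicBrief(
        genre="Dubstep",
        bpm_range=(140, 145),  # illustrative values only
        sections=["intro", "build", "drop", "breakdown"],
        instruments={"sub bass": "heavy and sustained", "growl lead": "aggressive", "snare pattern": "half-time"},
    )
    print(brief.to_prompt())
```

The point of the structure is that every field is something you can later check by ear, which feeds directly into the next suggestion.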

Suggestion 3: Break “genre correctness” into verifiable items.

The most useful habit in my testing was not settling for the coarse question “does it sound like electronic music?” but checking:

- Does it actually possess the key syntax of that sub-genre?

- Are the specified sections highlighted?

- Is the musicality sound?

This verification framework is worth reusing directly.
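If you want to reuse it, the checklist fits in a tiny script. Below is a minimal Python sketch, assuming you make every verdict yourself by ear; `Check` and `genre_report` are names I made up for illustration, and nothing here analyzes audio. It only records pass/fail answers and summarizes them per clip.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Check:
    """One yes/no item judged by ear after listening to a clip."""

    question: str
    passed: bool
    note: str = ""


def genre_report(track: str, checks: List[Check]) -> str:
    """Summarize the listening checks for a single generated clip."""
    passed = sum(1 for c in checks if c.passed)
    lines = [f"{track}: {passed}/{len(checks)} checks passed"]
    for c in checks:
        mark = "PASS" if c.passed else "FAIL"
        suffix = f" ({c.note})" if c.note else ""
        lines.append(f"  [{mark}] {c.question}{suffix}")
    return "\n".join(lines)


if __name__ == "__main__":
    # These two verdicts mirror my notes on the progressive metalcore test.
    print(genre_report("Progressive Metalcore", [
        Check("Does it have the key syntax of the sub-genre?", False, "heavy, but the style is off"),
        Check("Is the requested breakdown section highlighted?", False, "not effectively emphasized"),
    ]))
```

Keeping the questions as data rather than hard-coding them lets you add sub-genre-specific checks (for example, a half-time snare for Dubstep) without changing the report logic.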

07

A Few Blunt Words for the Product Team

If Gemini wants to move music capabilities from “fun” to “production-ready,” it needs to address three things:

- 01: Length and structural control: Moving from 30 seconds to controllable expansion.

- 02: Reference audio migration: Not just “understanding semantics,” but “understanding sound style structure.”

- 03: Sub-genre granular adherence: Not just hitting broad category tags, but hitting specific writing syntax.

Every step forward in these three areas will elevate the creator’s perception of the tool.

08

Final Conclusion

From the perspective of a former professional musician, my overall assessment is:

- Gemini’s current music capability offers more sense of direction than finished output.

- Semantic adherence is “fast and convenient,” but there is still a significant gap to professional music models.

- Its most valuable position is at the front end of the creative process, not the back end.

In other words: It is already a great “music creativity engine,” but not yet a “reliably deliverable music production factory.”

If you set your expectations there, you’ll find it very useful; if you treat it as a direct replacement for Suno/Udio, you will be consistently disappointed.

09

Appendix: External Information Verification (For Fact-Checking)

- 01: Google Workspace Updates (2026-02-18): Gemini App integrates Lyria 3, 30-second music generation, 8 languages, 18+ https://workspaceupdates.googleblog.com/2026/02/create-custom-soundtracks-with-lyria-3.html

- 02: Google, The Keyword blog (2026-02-18): Lyria 3 beta live in Gemini App; emphasizes original expression, no direct artist imitation; includes SynthID https://blog.google/innovation-and-ai/products/gemini-app/lyria-3/

- 03: Gemini Official Music Page: Lyria 3 generates 30-second tracks in Gemini (including vocals/lyrics/cover art) https://gemini.google/us/overview/music-generation/

- 04: Gemini Apps Help: Current tracks “up to 30 seconds,” supports download/sharing, quota limits apply https://support.google.com/gemini/answer/16901237

- 05: Gemini API Documentation (Lyria RealTime): Real-time streaming, sustainable steering, experimental model, currently instrumental only https://ai.google.dev/gemini-api/docs/music-generation

- 06: Google DeepMind Lyria 3 Model Card: Text input, music+lyrics output, evaluation dimensions, and safety governance framework https://deepmind.google/models/model-cards/lyria-3/

- 07: Suno Help (Comparison for “full song/extension capability”): Official Extend workflow documentation https://help.suno.com/en/articles/2409601d