Digital Strategy Review | 2026

Google Releases Nano Banana 2: Image Generation Hits the “High-Quality, Low-Latency” Inflection Point | Uncle Fruit AI Daily

By Uncle Fruit · Reading Time / 8 Min

Foreword

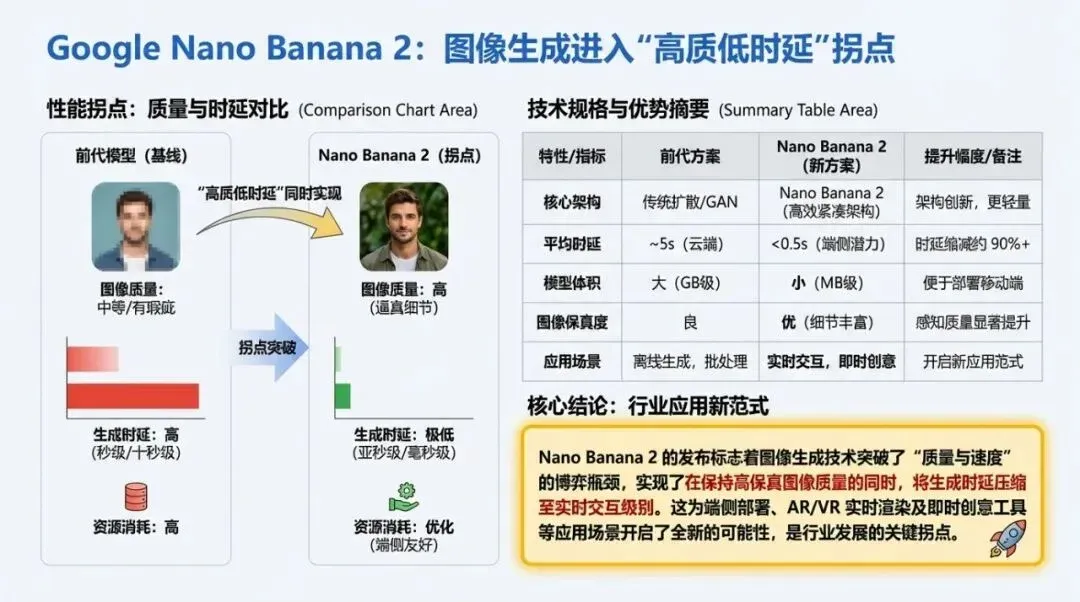

In the wave of AI developments on February 26, 2026, the most headline-worthy news isn’t “yet another new model,” but Google taking a major step toward resolving the long-standing “quality vs. speed” trade-off in image generation. Nano Banana 2 (Gemini 3.1 Flash Image) is not just an incremental parameter upgrade; it brings Pro-level image capabilities down to Flash-level response speeds, while integrating them into practical traffic entry points like Gemini and Search. For content, product, and growth teams, this signals a shift in how AI imagery is used: from an “offline production tool” to an “online interactive capability.” The value to the industry lies not in having one more model, but in reopening a commercially viable path.

Uncle Fruit’s PS: All images in this article were generated with the latest Nano Banana 2. That is the most direct test available, and to my eye there is no significant gap compared with the earlier Nano Banana Pro.

01

Today’s Headline News

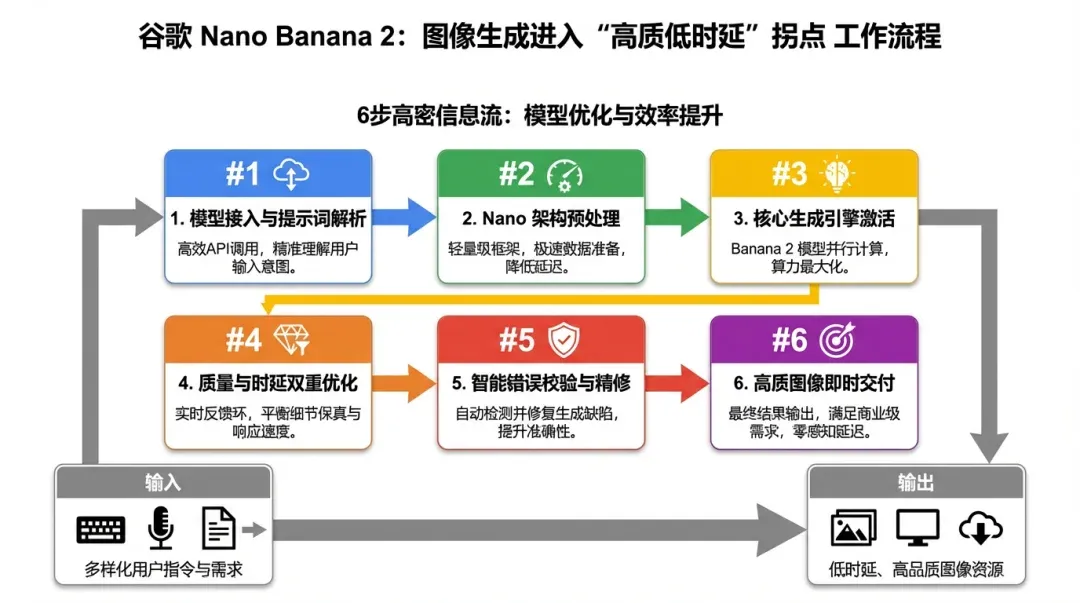

Google officially released Nano Banana 2 (Gemini 3.1 Flash Image) on February 26, 2026, with a very clear core positioning: bringing the high fidelity, complex-instruction comprehension, and subject consistency previously emphasized in the Pro tier to the low-latency experience of the Flash tier. The official message can be summarized in one sentence: you no longer have to sacrifice “better” for “faster,” or “faster” for “better.”

There are at least four key facts from this release worth noting.

First, the capability structure has shifted. By bundling advanced world knowledge, production-grade specifications, subject consistency, and stronger iterative editing into a single model line, Google signals that this is not just a visual improvement in “image quality,” but an upgrade aimed at “continuous iteration in production environments.”

Second, the entry points for deployment are broader. Beyond Gemini, Google has linked these capabilities with Search scenarios, in particular strengthening the “multi-object recognition + multi-step planning” visual search experience in high-frequency entry points like Circle to Search.

Third, the barrier to entry for developers has been lowered further. Google laid out clear access paths in its developer update: the capability is available through the Gemini API, Google AI Studio, Vertex AI, Firebase, and Antigravity.

Fourth, price-performance has moved to the forefront of the narrative. Google didn’t market the model as the “best in the lab,” but emphasized cost-effectiveness and iteration efficiency for “scalable deployment,” which usually means the target audience is no longer just model enthusiasts, but teams looking to launch products.

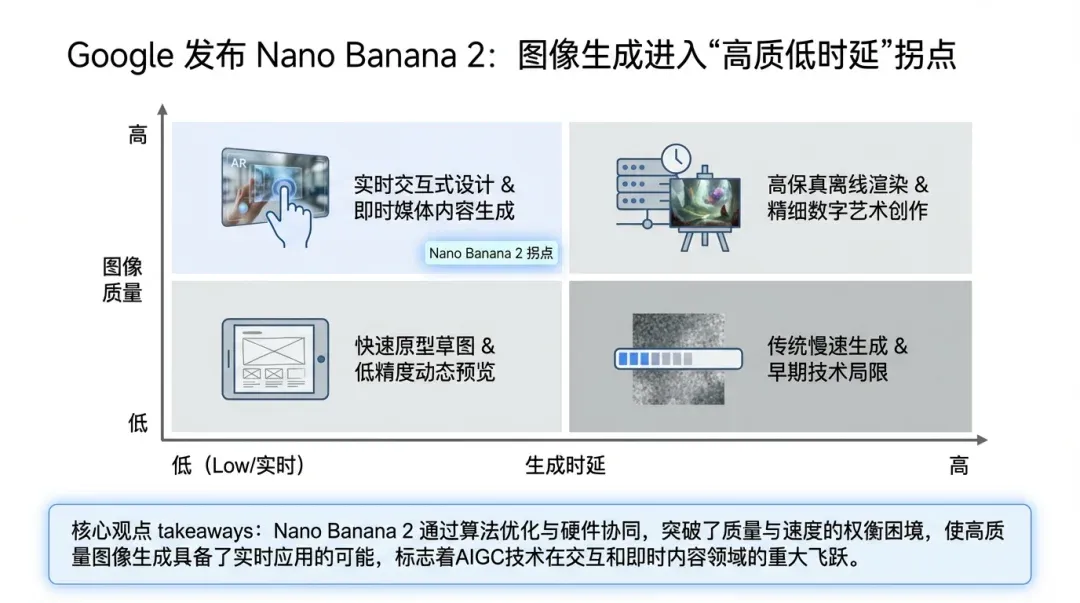

If we view this release within the context of the image generation race over the past two years, the key significance of Nano Banana 2 is that capability stratification is beginning to collapse. Previously, many teams split their workflows into two stages: fast models for exploration and slow models for finalization. Now, Google is explicitly pushing for “completing exploration and deliverable output in a single pipeline.” This will directly change product experience design. In the past, users tolerated “wait a while” for AI imagery; going forward, the default expectation will be “instant feedback, like search,” with results that are as usable as the output of professional tools.

Based on today’s public signals, Google’s choice to push this capability to a wider user and developer ecosystem at the end of February doesn’t look like an isolated release, but rather an acceleration of its 2026 multimodal strategy. Externally, it is a model update; internally, it is one deliberate beat in the cadence of its “product-platform-ecosystem” trinity. If you only understand it as “the image model got better,” you are underestimating the commercial consequences of this change.

02

Headline Analysis: Why This Matters More

The first layer of impact is the reconstruction of product forms. In the past, image AI could only act as a “back-end asset generator” in many scenarios because slow generation, long editing loops, and unstable controllability made front-end interactive experiences feel clunky. Now, if Flash-level speed can be stably combined with Pro-level quality, product teams can move image capabilities to the core steps of the user journey—such as real-time generation of display images on e-commerce product pages, instant generation of explanatory diagrams in educational scenarios, or marketing systems automatically producing multi-version assets for small-scale A/B testing before a campaign launch. Once this “real-time interactive visual generation” is established, growth methods will shift from a “material factory mindset” to a “conversational creative mindset.”

The second layer of impact is the reordering of organizational division of labor. Previously, “creative teams produce assets, engineering teams integrate models, and operations teams distribute them” was a serial process with many nodes and slow feedback. With improved model speed and editing capabilities, creative, product, and engineering teams will be closer to “same-screen collaboration”: creatives adjust styles directly in the business backend, engineering only maintains capability boundaries and cost guardrails, and operations writes back prompt strategies based on conversion data. Whoever builds this closed loop first will be the first to reap the “compound interest of high-frequency iteration.”

The third layer of impact is the change in industry competition standards. In the past, the outside world often evaluated image models based on “who draws better,” but in 2026, the metrics that truly determine commercial success are shifting to “who enters the main product traffic path faster, whose total cost is more controllable, and whose results are more stable in continuous tasks.” Nano Banana 2 puts “speed + quality + scalable access” on the same line, exerting systemic pressure on competitors rather than just single-point parameter pressure.

The fourth layer of impact is ecosystem synergy. Google’s updates on the same day are not limited to the model itself, but form synergies with Search’s multi-object recognition, new hardware experiences on Android, and developer platform access. This means they are not doing “model brand promotion,” but “embedding model capabilities into existing high-traffic products and developer ecosystems.” Any startup building an AI product should pay attention to this approach: the model itself is just the starting point; the real barriers are distribution entry points, data feedback chains, and engineering stability.

I believe this is important because it translates “technological progress in image AI” into “reconfigurable business processes.” The real watershed is not the model leaderboard, but whether enterprises have started treating image generation as real-time infrastructure. If your organization still treats image AI as an occasional content outsourcing tool, you will likely be significantly outpaced in efficiency, cost, and iteration speed over the next 6 months.

[Figure: flowchart of the methodology execution path]

03

Uncle Fruit’s Perspective

My judgment is: Nano Banana 2 is not a “nice-to-have” tool update, but a strategic signal that it is time to “rebuild your image workflow.” For most teams in content, e-commerce, and product growth, three things should start today.

First, re-cost your image production pipeline immediately. Break the chain of inspiration output, asset generation, version iteration, and release feedback into quantifiable steps, and confirm which segments can be directly replaced by real-time generation and which still require strong human control. The point is not “full automation,” but automating the most time-consuming, repetitive, and template-ready nodes first.
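One way to make that breakdown concrete is a small ranking script. The sketch below is only an illustration: the `Stage` fields, the `template_ready` estimate, and every number are hypothetical placeholders to be replaced with your own measurements.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    hours_per_week: float  # measured human time spent on this stage
    template_ready: float  # 0..1, estimated share of the work that is repeatable (assumption)


def automation_priority(stages: list[Stage]) -> list[tuple[str, float]]:
    """Rank stages by the weekly hours that could plausibly move to real-time generation."""
    scored = [(s.name, round(s.hours_per_week * s.template_ready, 1)) for s in stages]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Illustrative numbers only, not measurements.
pipeline = [
    Stage("inspiration output", 6.0, 0.2),
    Stage("asset generation", 20.0, 0.7),
    Stage("version iteration", 12.0, 0.8),
    Stage("release feedback", 4.0, 0.5),
]

for name, hours in automation_priority(pipeline):
    print(f"{name}: ~{hours} h/week automatable")
```

With these placeholder inputs, asset generation and version iteration rank first, which matches the advice to start with the repetitive, template-ready nodes rather than aiming for full automation.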

Second, build a “model capability assessment table”—don’t just look at subjective image quality. At least evaluate five dimensions simultaneously: time-to-first-frame, complex instruction following, subject consistency, editing controllability, and total cost per thousand tasks. If a model is visually stunning but has high iteration costs and unstable output, it will eventually slow down the team’s rhythm.
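As a rough sketch of such an assessment table, the five dimensions can be folded into a single weighted score. The weights, the latency and cost budgets, and the two candidate entries below are all hypothetical; they show the mechanics and are not benchmark results for any real model.

```python
from dataclasses import dataclass


@dataclass
class ModelEval:
    name: str
    time_to_first_frame_s: float    # lower is better
    instruction_following: float    # 0..1, share of test prompts satisfied
    subject_consistency: float      # 0..1
    editing_controllability: float  # 0..1
    cost_per_1k_tasks: float        # lower is better, any currency


# Hypothetical weights and budgets; tune them to your own product.
WEIGHTS = {"latency": 0.25, "instruction": 0.2, "consistency": 0.2, "editing": 0.15, "cost": 0.2}
LATENCY_BUDGET_S = 10.0
COST_BUDGET = 50.0


def within_budget(value: float, budget: float) -> float:
    """Map a lower-is-better metric to 0..1, where 0 means at or over budget."""
    return max(0.0, 1.0 - value / budget)


def score(m: ModelEval) -> float:
    """Weighted sum over the five dimensions, rounded to three decimals."""
    parts = {
        "latency": within_budget(m.time_to_first_frame_s, LATENCY_BUDGET_S),
        "instruction": m.instruction_following,
        "consistency": m.subject_consistency,
        "editing": m.editing_controllability,
        "cost": within_budget(m.cost_per_1k_tasks, COST_BUDGET),
    }
    return round(sum(WEIGHTS[k] * v for k, v in parts.items()), 3)


# Made-up candidates: one visually strong but slow and costly, one balanced.
stunning_but_slow = ModelEval("candidate_a", 8.0, 0.9, 0.9, 0.8, 40.0)
fast_and_steady = ModelEval("candidate_b", 2.0, 0.8, 0.8, 0.8, 10.0)

for m in (stunning_but_slow, fast_and_steady):
    print(m.name, score(m))
```

Under these assumed weights the balanced candidate scores higher despite lower quality ratings, which is the trade-off the paragraph above warns about.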

Third, upgrade “prompt engineering” to “operational asset management.” Many teams treat prompts as personal experience, which is inefficient. A more sustainable approach is to build a library of templates, scenarios, and failure cases, and bind conversion data back to template versions. This way, you gain organizational capability rather than just the “feel” of a specific colleague.
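A minimal sketch of what “binding conversion data back to template versions” could look like in code follows. The class names, fields, and the `min_impressions` threshold are assumptions made for illustration; a real system would persist this in a database and would also store scenarios and failure cases.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TemplateVersion:
    template_id: str
    version: int
    prompt: str
    impressions: int = 0
    conversions: int = 0

    @property
    def conversion_rate(self) -> float:
        return self.conversions / self.impressions if self.impressions else 0.0


class PromptLibrary:
    """In-memory library that keeps every version of each prompt template."""

    def __init__(self) -> None:
        self._versions: dict[str, list[TemplateVersion]] = {}

    def add_version(self, template_id: str, prompt: str) -> TemplateVersion:
        versions = self._versions.setdefault(template_id, [])
        tv = TemplateVersion(template_id, len(versions) + 1, prompt)
        versions.append(tv)
        return tv

    def record(self, template_id: str, version: int, impressions: int, conversions: int) -> None:
        """Write channel results back onto the exact version that produced them."""
        tv = self._versions[template_id][version - 1]
        tv.impressions += impressions
        tv.conversions += conversions

    def best(self, template_id: str, min_impressions: int = 100) -> Optional[TemplateVersion]:
        """Best-converting version with enough traffic to be worth comparing."""
        eligible = [v for v in self._versions.get(template_id, []) if v.impressions >= min_impressions]
        return max(eligible, key=lambda v: v.conversion_rate, default=None)


lib = PromptLibrary()
lib.add_version("product-hero", "Studio shot of {product} on a white background")
lib.add_version("product-hero", "Lifestyle shot of {product} in a bright kitchen")
lib.record("product-hero", 1, impressions=1000, conversions=20)
lib.record("product-hero", 2, impressions=1000, conversions=35)
print(lib.best("product-hero").version)
```

Keeping versions immutable and attaching results to them is what turns one colleague’s intuition into a record the whole team can query.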

I also want to point out a realistic issue: when image generation speed and quality improve simultaneously, the market will rapidly homogenize. What truly sets you apart is no longer “can you generate,” but “do you understand business goals and continuously produce convertible visuals.” So, don’t get obsessed with new model features themselves; integrate them into your growth loop. For teams that already have a certain scale of content, today is the window to redesign your production system.

[Figure: data chart of key comparisons and conclusions]

04

Quick Glance at Other Key News

1) Google API Keys Silent Privilege Escalation Vulnerability Triggers Security Alert

Fact: Truffle Security disclosed on 2026-02-25 that Google API Keys historically embedded in public web pages could be silently granted sensitive access capabilities after enabling the Gemini API for the same project, creating a privilege escalation risk. Impact: This is not a “single team configuration error,” but a systemic risk that many existing projects might fall into, exposing both security and costs. URL: https://trufflesecurity.com/blog/google-api-keys-werent-secrets-but-then-gemini-changed-the-rules
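As a first triage step, a team could scan its own public pages for key-like strings. The sketch below rests on one assumption: that Google API keys can be recognized by the commonly cited `AIza` prefix followed by 35 URL-safe characters. It only finds candidates; whether a given key is actually affected depends on the project’s enabled APIs and key restrictions, which have to be checked in the project itself.

```python
import re

# Heuristic pattern (assumption): "AIza" plus 35 URL-safe characters,
# not followed by another key character. Matches are candidates only.
GOOGLE_KEY_PATTERN = re.compile(r"AIza[0-9A-Za-z_\-]{35}(?![0-9A-Za-z_\-])")


def find_exposed_keys(page_source: str) -> list[str]:
    """Return the distinct key-like strings found in a page, in first-seen order."""
    seen: dict[str, None] = {}
    for match in GOOGLE_KEY_PATTERN.findall(page_source):
        seen.setdefault(match, None)
    return list(seen)


def redact(key: str) -> str:
    """Show only enough of a key to locate it, never the full value."""
    return f"{key[:8]}...{key[-4:]}"


fake_key = "AIza" + "X" * 35  # placeholder, not a real credential
sample_page = f'<script src="https://maps.example.com/js?key={fake_key}"></script>'

for key in find_exposed_keys(sample_page):
    print("possible exposed key:", redact(key))
```

Redacting before logging matters here: a scan report that prints full keys becomes a second place where they leak.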

2) tldraw to Migrate Its Tests to a Private Repository, Open Source Business Model Under Pressure

Fact: Discussions around AI’s ability to reconstruct implementations via test suites are heating up, and tldraw’s related actions are widely interpreted as “the open-source moat in the AI era is being reassessed.” Impact: Future competition for open-source projects will not just be about code quality, but also licensing strategies, ecosystem relationships, and commercial closed loops. URL: https://simonwillison.net/2026/Feb/25/closed-tests/

3) OpenAI and PNNL Collaborate to Advance AI in Federal Approval Processes

Fact: OpenAI announced a partnership with the Pacific Northwest National Laboratory (PNNL) on 2026-02-26 to establish evaluations around tasks like NEPA document drafting, with public results showing significant time savings in some tasks. Impact: AI is moving from “office efficiency” to “high-regulation, high-responsibility processes,” with deepening implementation in government and industrial scenarios. URL: https://openai.com/index/pacific-northwest-national-laboratory/

4) OpenAI Codex and Figma Link Up for a Code-to-Design Round Trip

Fact: OpenAI announced a new integration with Figma on 2026-02-26, using an MCP Server to make the round-trip between code and design canvases smoother. Impact: Product collaboration processes are shifting from “design-first or development-first” to “parallel iteration,” further blurring organizational boundaries. URL: https://openai.com/index/figma-partnership/

5) Circle to Search Upgrades to Multi-Object Retrieval, Visual Search Takes Another Step Forward

Fact: In its 2026-02-25 update, Google emphasized that Circle to Search can understand and retrieve multiple objects in an image in a single interaction. Impact: Visual understanding capabilities are beginning to directly support consumer decision-making chains, and search, e-commerce, and advertising scenarios will become more tightly integrated. URL: https://blog.google/products-and-platforms/products/search/circle-to-search-february-2026/

[Figure: matrix chart of applicability boundaries and strategic choices]

05

Trends and Opportunities

Looking ahead to the next 2-4 weeks, I see three high-certainty trends. First, the image generation track will shift from “model competition” to “product experience competition.” Any application driven solely by model fame will struggle; the real opportunities lie in products that deeply bind image capabilities to specific business actions. Second, security governance will become a prerequisite for growth. The API key incident has shown that the faster AI capabilities are integrated, the more permission and key management must be prioritized; without it, the faster you grow, the greater the risk. Third, cross-role collaboration tools will continue to accelerate. Whether it’s code-to-design or the linkage between image generation and search, the underlying driver is the integration of “creative, engineering, and operations.”

Regarding specific opportunities, I have advice for three types of teams:

For content and brand teams: Build a “hot-topic response visual factory,” iterating multi-version assets for the same subject matter on an hourly basis and converging quickly based on channel feedback.

For e-commerce and growth teams: Prioritize the “multi-object visual retrieval + instant asset generation” combination to reduce friction costs between inspiration and purchase.

For AI startup teams: Don’t get caught up in single-model capabilities; focus on “process-layer products that can be sustainably reused in business scenarios,” such as automatic template orchestration, asset evaluation, and feedback loops for ad delivery.

Closing thought: The true value of Nano Banana 2 is not making images look better, but shortening the road “from idea to launch.” Whoever engineers this road first will be the first to capture the next round of efficiency dividends.