Over the past two years, discussions regarding AI’s impact on the internet have largely followed two distinct paths.

The first is content-centric: Will search be rewritten? Should SEO evolve into GEO (Generative Engine Optimization)? Will websites be drained of clicks by “answer engines”? The second is product-centric: Should WebApps integrate MCP (Model Context Protocol)? Should they build agents? Should they provide an AI entry point for users?

WebMCP, which Google’s Chrome team recently released as an early preview, is the first initiative I have seen that weaves these two threads together.

Its real significance is not that it is “yet another protocol,” but that it changes the role of the webpage. Historically, webpages were objects to be browsed, indexed, clicked, and scraped. WebMCP aims to let webpages proactively expose their capabilities to AI: what tools they have, how those tools should be called, and which tasks are best delegated to machines. It moves the Web from a container of content toward a “layer of actionable capabilities.”

If this path succeeds, it won’t just affect browser teams. SEO, GEO, SaaS, PLG (Product-Led Growth), WebApp product design, growth strategies, and information architecture will all undergo a transformation.

Below, I will focus on answering six key questions:

- What exactly is WebMCP, and how does it differ from traditional MCP?

- Why is Google pushing this now?

- What structural changes will it bring to SEO/GEO?

- How should SEO practitioners prepare?

- How should PLG-driven WebApps start becoming “AI-friendly”?

- What are the most important signals to watch over the next two years?

The Bottom Line First

I’ll state my conclusion up front so you don’t lose the thread.

I don’t believe WebMCP should be viewed as a niche experiment for browser enthusiasts or as just “another AI interface for a webpage.” It is closer to a piece of infrastructure for the agent era: it lets websites be not just read by AI, but invoked by AI on the frontend in a secure, structured, and controllable way.

Once this takes hold, the competitive logic of the Web will shift from “who is easier to find” to “who is easier for AI to invoke correctly.”

This means:

- For SEO: Pages will no longer just answer questions; they must provide entry points for capabilities that agents can use.

- For GEO: While “citation eligibility” remains important, “invocability” will become a new tier of authority.

- For WebApps: Frontend design, state management, permission boundaries, and information architecture will, for the first time, need to be rewritten from the perspective of “how can an agent safely call me?”

What Exactly Is WebMCP?

Let’s define it in plain language.

The official vision for WebMCP is to provide a standardized interface within the browser environment, allowing web developers to register specific features of their pages as “tools” that AI agents can invoke. These tools are not remote capabilities provided by a backend MCP server; they are exposed directly by the webpage’s own JavaScript.

The official specification page puts it plainly: the WebMCP API enables web applications to provide JavaScript-based tools to AI agents. In simpler terms, the webpage can say: “I have these capabilities; if an AI needs to help a user complete a task, don’t just blindly click the DOM; call these tools I’ve defined.”
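To make “the page declares its own tools” concrete, here is a minimal TypeScript sketch. It is not the WebMCP API: `PageTool`, `PageToolRegistry`, and the `add_to_cart` tool are hypothetical names invented for illustration, and the draft specification remains the reference for the real interface. The sketch only shows the general shape: a name, a description, declared parameters, and a function implemented by the page’s own script.

```typescript
// Hypothetical shapes for illustration only; this is NOT the WebMCP API surface.
interface PageTool {
  name: string;
  description: string; // natural-language hint an agent can read
  parameters: Record<string, "string" | "number">; // deliberately simplified schema
  execute(args: Record<string, unknown>): string;
}

// What an agent gets to see: everything except the implementation.
type ToolSummary = Omit<PageTool, "execute">;

class PageToolRegistry {
  private tools: Record<string, PageTool> = {};

  register(tool: PageTool): void {
    if (Object.prototype.hasOwnProperty.call(this.tools, tool.name)) {
      throw new Error(`Tool already registered: ${tool.name}`);
    }
    this.tools[tool.name] = tool;
  }

  describe(): ToolSummary[] {
    return Object.keys(this.tools).map((key) => {
      const { name, description, parameters } = this.tools[key];
      return { name, description, parameters };
    });
  }

  call(name: string, args: Record<string, unknown>): string {
    if (!Object.prototype.hasOwnProperty.call(this.tools, name)) {
      throw new Error(`Unknown tool: ${name}`);
    }
    return this.tools[name].execute(args);
  }
}

// The page's own script exposes an existing feature as a tool.
const cart: string[] = [];
const registry = new PageToolRegistry();
registry.register({
  name: "add_to_cart",
  description: "Add a product to the shopping cart by SKU.",
  parameters: { sku: "string" },
  execute: (args) => {
    cart.push(String(args.sku));
    return `Cart now holds ${cart.length} item(s).`;
  },
});

// An agent would first discover what is available, then call by name.
console.log(registry.describe());
```

The design point is that the logic stays in client-side code that already exists; the page only adds a declared, named entry point on top of it.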

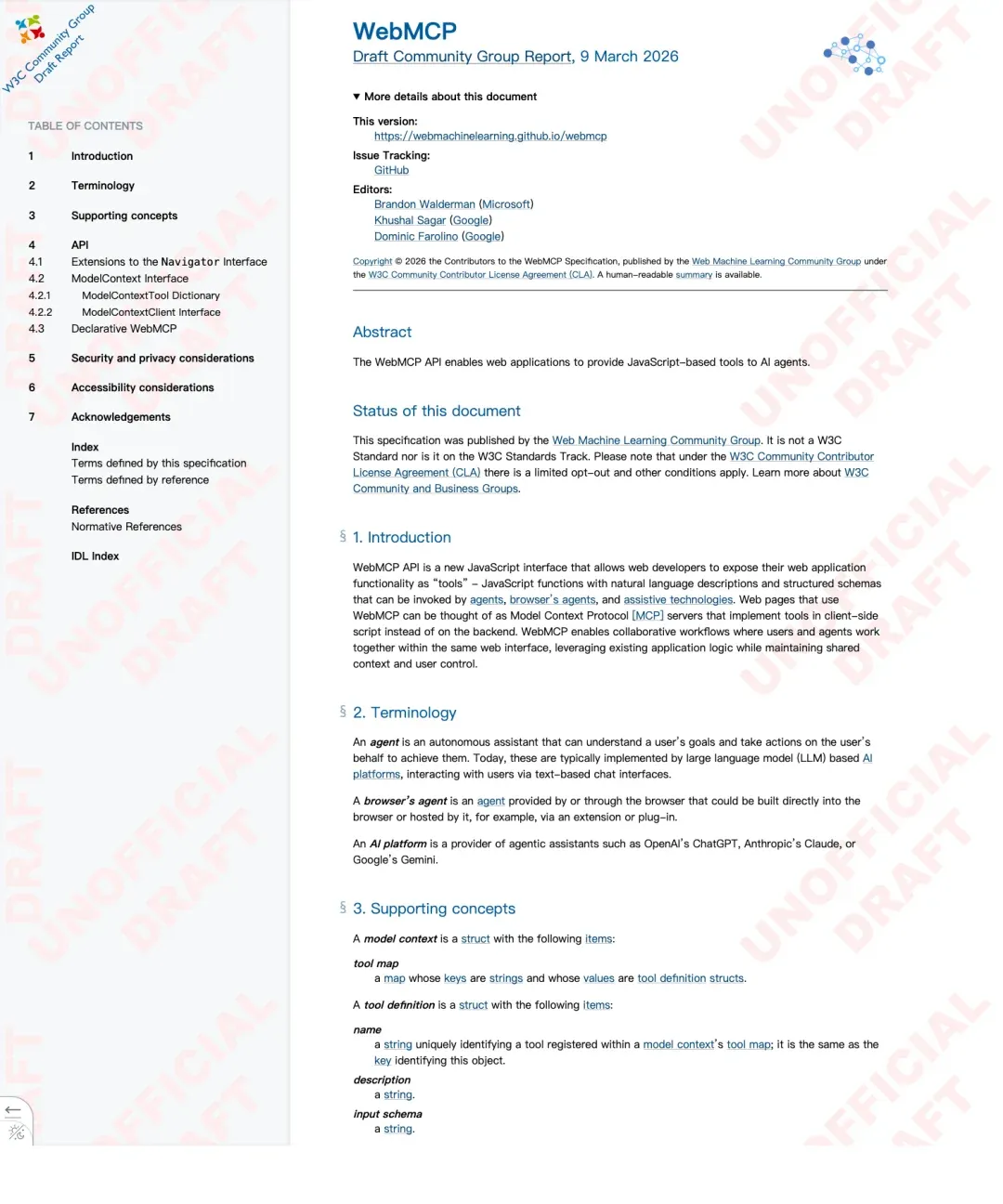

Two details in the image above matter most:

- It is currently a Draft Community Group Report, meaning it is not yet a finalized W3C standard.

- The editors are from Microsoft and Google, indicating this isn’t one browser vendor playing in a silo, but an attempt to build broader Web consensus.

Source: https://webmachinelearning.github.io/webmcp/

If you read the specification, it defines WebMCP even more clearly: a webpage can be treated as an MCP server, but these tools are implemented via client-side scripts rather than the backend. This is crucial because it confirms that WebMCP focuses on “capability exposure,” not just “content discovery.”

In other words, the default relationship between traditional websites and AI is:

- AI reads me.

- AI summarizes me.

- AI cites me.

WebMCP attempts to add a new relationship:

- AI can invoke me.

This is why I find it significant. It means a webpage is no longer just a piece of text to be understood, but a capability to be triggered.

How Does It Differ from Traditional MCP, DOM Automation, and Browser Extensions?

When hearing “webpages provide tools to AI,” many think: “Isn’t that just MCP?” or “Isn’t that just letting agents click buttons and fill forms?”

It’s not the same thing.

1. Difference from Backend MCP

Traditional MCP is a protocol for the backend world: to connect tools to agents like ChatGPT, Claude, or Copilot, you typically need to build and run a separate MCP server alongside your web frontend.

The problem is that for many web teams, this is costly. Much of your product logic—state, interaction, permissions, interface semantics, and immediate context—already lives in the browser. Building a parallel interface layer on the backend just to expose capabilities to agents is redundant.

WebMCP’s ambition is to avoid this overhaul: the webpage exposes existing capabilities in a standard way, and the AI invokes them via structured interfaces within the browser environment.

2. Difference from DOM Automation

In the past, agents operating on webpages essentially “looked at the page + guessed the button + simulated a click.” This works, but it is:

- Slow

- Fragile

- Prone to misinterpreting page structure

- Dependent on visual layout and element naming

- Likely to break after a UI update

The Chrome team’s early preview announcement noted that WebMCP provides structured tools, aiming for faster, more stable, and more accurate agent execution. This acknowledges that while DOM actuation is usable, it isn’t “industrial-grade” for the long term.
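The fragility argument can be shown with a toy model. The sketch below does not use a real DOM or the WebMCP API; `ButtonNode`, `clickByLabel`, and the `submit_order` tool name are all hypothetical. It compares an agent that locates a control by its visible label with one that calls a published tool name, before and after a UI redesign that renames the button.

```typescript
// A toy stand-in for a rendered page: buttons with visible labels and handlers.
interface ButtonNode {
  label: string;
  onClick(): string;
}

function submitOrder(): string {
  return "order submitted";
}

// Two versions of the same UI; the redesign only changed the button label.
const pageV1: ButtonNode[] = [{ label: "Buy now", onClick: submitOrder }];
const pageV2: ButtonNode[] = [{ label: "Place order", onClick: submitOrder }];

// DOM-style automation: the agent guesses the control from what it sees.
function clickByLabel(page: ButtonNode[], label: string): string {
  const matches = page.filter((button) => button.label === label);
  if (matches.length === 0) {
    return 'failed: no button labelled "' + label + '"';
  }
  return matches[0].onClick();
}

// Structured invocation: the page publishes a tool name that outlives redesigns.
const toolsV2: Record<string, () => string> = { submit_order: submitOrder };

console.log(clickByLabel(pageV1, "Buy now")); // order submitted
console.log(clickByLabel(pageV2, "Buy now")); // failed: no button labelled "Buy now"
console.log(toolsV2["submit_order"]());       // order submitted
```

The label-based path breaks on a purely cosmetic change, while the named tool keeps working because its contract is independent of layout and copy.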

3. Difference from Browser Extensions

Extensions can add agent capabilities to a page, but they act as an “external takeover.” WebMCP is the opposite: the page itself declares, “I am willing to be invoked in this way.” This shift changes who defines the permission boundaries and product semantics.

Why Is Google Pushing This Standard Now?

1. Google Needs a Web-Standard Way for Agents to Connect

The current ecosystem is fragmented: MCP, OpenAPI, private integrations, extensions, and scraping-based automation. None of these truly belongs to the Web platform itself. My reading is that Google does not want the agent era to treat webpages as objects that external AI platforms can hijack at will.

2. It Aligns with the “AI-in-the-Browser” Strategy

If you look at WebMCP alongside the direction of Chrome and Gemini, it’s clear: the browser wants to be the active coordination layer between the user and the website. WebMCP fills the gap: if there is an agent in the browser, how should the webpage hand over its capabilities?

3. It Aligns with Accessibility and Collaboration

The WebMCP documentation frequently mentions assistive technologies. This isn’t just lip service. Many accessibility tools face the same challenges as agents: they need to understand page structure, know what is actionable, and complete tasks reliably. By serving both, WebMCP gains much stronger legitimacy in the Web ecosystem.

How Will It Rewrite SEO/GEO?

1. Competition Shifts from “Pages” to “Capabilities”

The basic unit of SEO was the URL. GEO shifted the focus to “paragraphs, viewpoints, and entities.” WebMCP pushes this further: the unit of competition becomes what actionable capabilities the page exposes.

2. GEO Moves from “Citation Optimization” to “Invocability Optimization”

In the future, a brand’s competitiveness in the AI world will have three layers:

- Visibility: Does AI mention you?

- Credibility: Is the semantic context stable when AI cites you?

- Invocability: Can AI complete actions directly using your webpage’s capabilities?

3. The Migration from “Answer Pages” to “Action Pages”

If a webpage can expose capabilities, user journeys will shorten. Instead of a user searching, clicking, finding a feature, and operating it, the agent will find the page and invoke the capability directly. This will compress traditional click-through logic.

How Should SEO Practitioners Prepare?

- Categorize Pages: Distinguish between “Content Assets” (for reading/citing) and “Capability Assets” (for invoking).

- Inject “Task Semantics” into Information Architecture (IA): Ensure your buttons, input parameters, and success/failure conditions are structured and clear, not just visually intuitive.

- Upgrade GEO: Don’t just optimize text for citation; also optimize how clearly your site’s capabilities are named, described, and exposed.
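As a rough illustration of “task semantics,” the TypeScript sketch below spells out one action’s inputs and its success and failure conditions as data rather than as visual cues. Everything in it is hypothetical (the `bookTable` action, the error codes, the availability list); it shows one possible shape, not a prescribed format.

```typescript
// Hypothetical result shape: every call ends in an explicit success or failure.
type ToolResult =
  | { kind: "success"; data: { bookingId: string } }
  | { kind: "failure"; error: "INVALID_INPUT" | "NO_AVAILABILITY"; message: string };

interface BookTableInput {
  partySize: number; // integer from 1 to 12
  date: string;      // calendar date written as YYYY-MM-DD
}

// Made-up availability data for the example.
const fullyBookedDates: string[] = ["2026-03-01"];

function bookTable(input: BookTableInput): ToolResult {
  const sizeIsInteger = Math.floor(input.partySize) === input.partySize;
  if (!sizeIsInteger || input.partySize < 1 || input.partySize > 12) {
    return { kind: "failure", error: "INVALID_INPUT", message: "partySize must be an integer from 1 to 12" };
  }
  if (!/^\d{4}-\d{2}-\d{2}$/.test(input.date)) {
    return { kind: "failure", error: "INVALID_INPUT", message: "date must look like YYYY-MM-DD" };
  }
  if (fullyBookedDates.indexOf(input.date) !== -1) {
    return { kind: "failure", error: "NO_AVAILABILITY", message: "no tables left on " + input.date };
  }
  return { kind: "success", data: { bookingId: "bk-" + input.date + "-" + input.partySize } };
}

console.log(bookTable({ partySize: 2, date: "2026-03-02" }));
console.log(bookTable({ partySize: 2, date: "2026-03-01" }));
```

A human can recover from a vague error toast; an agent needs the machine-readable `error` code to decide whether to retry, ask the user, or give up.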

How Should PLG WebApps Build AI-Friendly Products?

- Deconstruct Capabilities: Before “connecting an agent,” break your product down into declarable actions.

- Prioritize Shared Context & User Control: In WebApp scenarios, users don’t want black-box agents. Design for “human-in-the-loop” workflows where the agent acts as a co-pilot.

- Design “Agent Onboarding”: Just as you design onboarding for humans, design it for agents. How does an agent discover your capabilities? Where are the permission boundaries?
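One way to picture the “human-in-the-loop” and permission-boundary points above is a gate that every agent-initiated action passes through. The TypeScript sketch below is an assumption-laden illustration: `AgentAction`, `requiresConfirmation`, and `invokeWithUserControl` are hypothetical names, and the confirmation step is a plain callback standing in for whatever dialog the product would actually show.

```typescript
// Hypothetical action descriptor: each action states whether it needs user approval.
interface AgentAction {
  name: string;
  requiresConfirmation: boolean;
  run(): string;
}

// Stand-in for a real confirmation dialog; the page decides how to ask the user.
type AskUser = (question: string) => boolean;

function invokeWithUserControl(action: AgentAction, askUser: AskUser): string {
  if (action.requiresConfirmation) {
    const approved = askUser('Allow the agent to run "' + action.name + '"?');
    if (!approved) {
      return "declined by user";
    }
  }
  return action.run();
}

const draftInvoice: AgentAction = {
  name: "draft_invoice",
  requiresConfirmation: false, // reversible, so the agent may proceed on its own
  run: () => "invoice drafted",
};

const sendInvoice: AgentAction = {
  name: "send_invoice",
  requiresConfirmation: true, // irreversible, so the user must approve first
  run: () => "invoice sent",
};

const userSaysNo: AskUser = () => false;
const userSaysYes: AskUser = () => true;

console.log(invokeWithUserControl(draftInvoice, userSaysNo)); // invoice drafted
console.log(invokeWithUserControl(sendInvoice, userSaysNo));  // declined by user
console.log(invokeWithUserControl(sendInvoice, userSaysYes)); // invoice sent
```

Marking the boundary per action keeps the agent in a co-pilot role: low-risk steps run freely, while anything irreversible waits for the user.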

My Predictions for the Next Two Years

- Not an Overnight Shift: WebMCP is still in the draft/preview stage. Don’t rush to build, but start observing.

- “Invocability” as a New Tier: It will become the next competitive frontier after “Visibility.”

- SEO Roles Will Evolve: SEOs will become a hybrid of “Content + Product + Semantic Systems” experts.

- PLG WebApps Need “AI-Friendly” Refactoring: If your product is “clunky” for an agent to navigate, you will lose out in the agent-first era.

- Understand Before Betting: If you are a content site, focus on “citation eligibility.” If you are a WebApp, start auditing your “exposable actions.”

The takeaway: WebMCP pushes the webpage from an “object to be understood” to a “tool for collaboration.” If this takes root, the next wave of Web competition will happen not just on content pages, but at the layers of action, capability, and collaboration.