Digital Strategy Review | 2026

OpenAI’s $110 Billion Funding and the “Multi-Cloud Alliance”: AI Enters the Era of Super-Capital | Uncle Guo’s AI Daily

By Uncle Guo · Reading Time / 8 Min

Preface

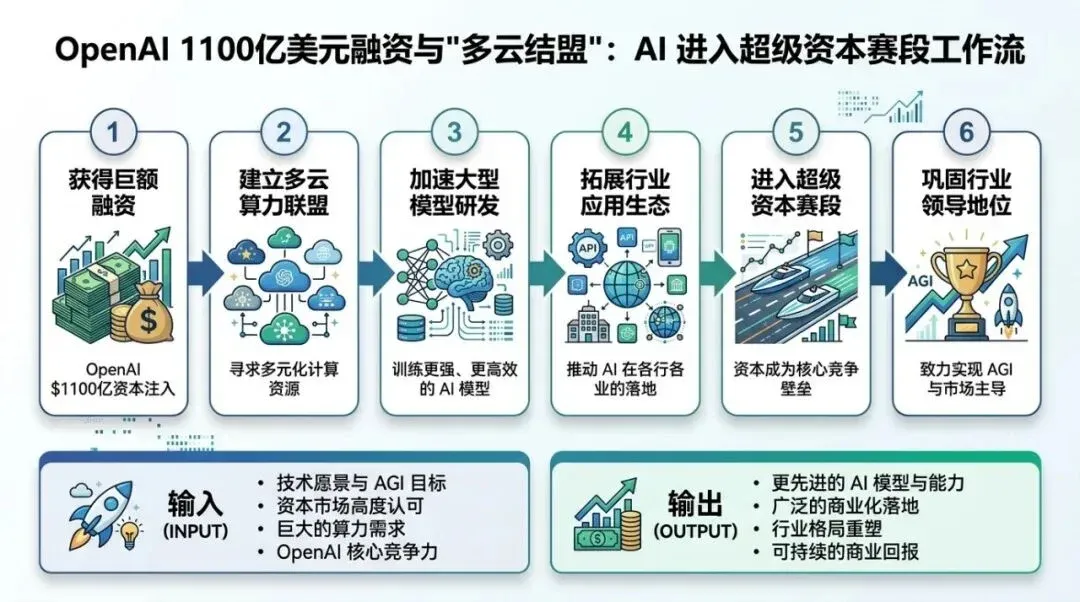

OpenAI’s record-breaking funding round has brought SoftBank, NVIDIA, and Amazon to the same table, while simultaneously confirming continued cooperation with Microsoft. On the surface, it’s about money; in reality, it’s about “industry positioning”: who controls compute supply, who controls the enterprise gateway, and who can push Agents from demos into governable production systems.

01

Today’s Top Headlines

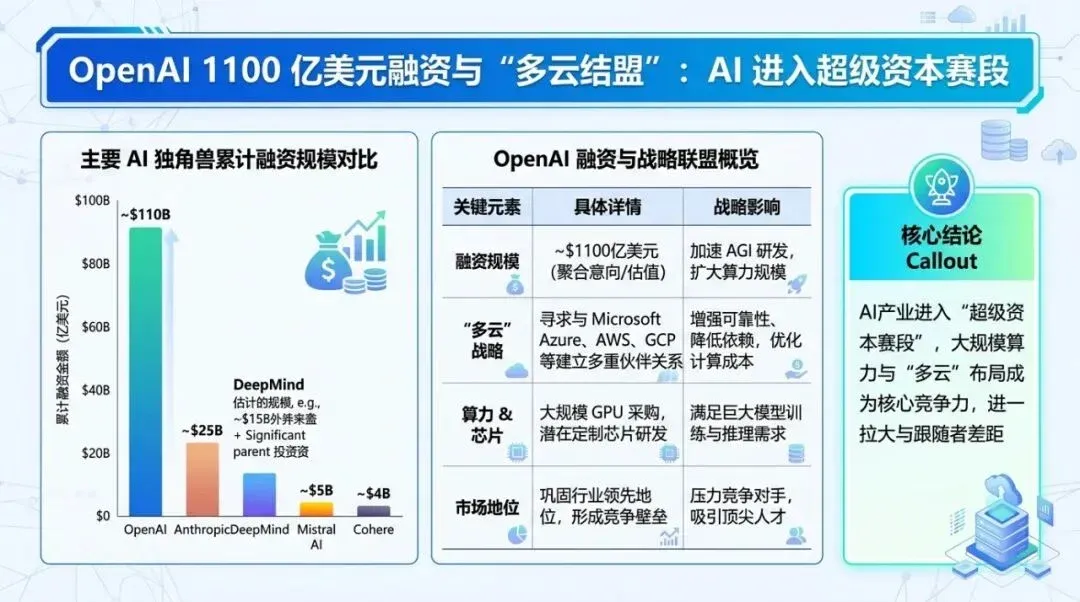

In a nutshell: OpenAI has announced a $110 billion funding round backed by SoftBank, NVIDIA, Amazon, and others. Simultaneously, it is advancing a strategic partnership with Amazon while confirming its ongoing relationship with Microsoft. The AI industry has entered a new phase of “Super-Capital + Super-Infrastructure.”

I prefer to break this news down into three “verifiable facts” rather than emotional interpretations:

01 Funding Scale and Valuation Anchor: OpenAI disclosed the funding scale and major investors in its official announcement, providing a pre-money valuation anchor. Official sources:

• OpenAI: Scaling AI for everyone (includes investor info and funding narrative) https://openai.com/index/scaling-ai-for-everyone

• OpenAI: OpenAI and Amazon announce strategic partnership https://openai.com/index/amazon-partnership

• OpenAI: Continuing the Microsoft partnership https://openai.com/index/continuing-microsoft-partnership

Simultaneously, multiple mainstream media outlets have independently reported and cross-verified this round (two representative sources listed here):

• AP: https://apnews.com/article/openai-funding-nvidia-amazon-softbank-68842e30163b197d6c6ed0e1e8c23eaf

• FT: https://www.ft.com/content/bcde7994-32c6-4a1f-a63c-01fb7d9f2c72

02 Amazon’s “money” is not a purely financial investment, but a cloud gateway tie-in: If you view Amazon only as an investor, you miss the critical point: this capital injection comes with platform-level cooperation that integrates OpenAI’s capabilities into “AWS’s enterprise procurement path.” Amazon officially frames this as a strategic partnership rather than a pure investment:

• About Amazon (AWS Partnership): https://www.aboutamazon.com/news/aws/openai-aws-strategic-partnership

03 Agent infrastructure is shifting from “application-layer hype” to “runtime standard parts”: In the same window, Amazon Bedrock launched a stateful runtime for Agents, and OpenAI published a corresponding post. This means enterprises no longer need to piece together their own “memory/state/isolation/permissions/audit” foundations from scratch when building multi-step workflows.

• OpenAI (Bedrock Stateful Runtime): https://openai.com/index/introducing-the-stateful-runtime-environment-for-agents-in-amazon-bedrock

• AWS Documentation (AgentCore Runtime): https://docs.aws.amazon.com/bedrock/latest/userguide/agentcore-runtime.html

When you stack these three points together, the significance of the headline becomes clear: this isn’t just a story about “OpenAI raising more money.” It is about frontier model companies using capital to lock in compute and enterprise distribution channels, while significantly lowering the barrier to Agent adoption through infrastructure products.

02

Analysis: Why This Matters More

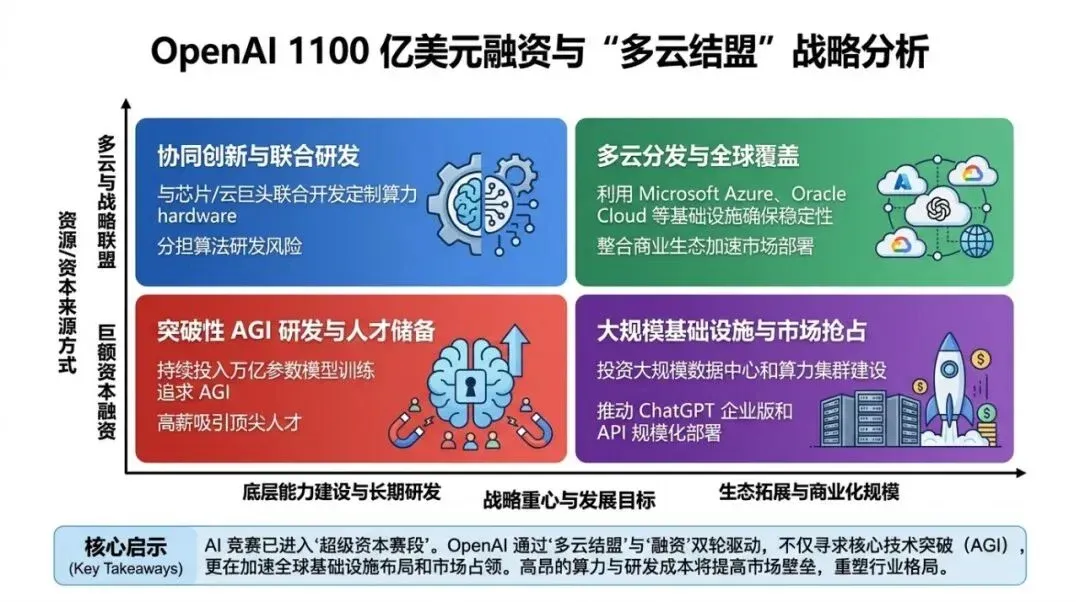

Many people instinctively view the $110 billion through the lens of “bubble/mania,” but I am more concerned with how it rewrites the industry structure in three ways.

The first rewrite: AI competition is upgrading from a “model capability race” to a “capital density race.” While model capability competition persists, once the cost curves for training and inference reach a new order of magnitude, the true determinants of speed are “who can reliably secure compute, who can reliably secure enterprise budgets, and who can reliably secure global distribution.” The larger the round, the more clearly it makes one point: to stay at the table for the next two years, you must first buy a ticket.

The second rewrite: Cloud provider relationships are shifting from “unilateral supply” to “co-ownership + co-distribution.” In the past, you could view it as: Cloud sells compute, model company buys compute. Now, it looks more like: Cloud providers don’t just sell compute; they embed model capabilities into their own product matrices, keeping enterprise customers “naturally” within their ecosystem through procurement, billing, permissions, compliance, and operations. OpenAI deepening ties with AWS while publicly confirming its continued partnership with Microsoft is essentially a more pragmatic strategy:

• For OpenAI: Avoid vendor lock-in to a single cloud while maximizing coverage.

• For enterprise customers: You will see more scenarios of “the same model, multiple cloud gateways,” increasing bargaining power but also increasing architectural complexity.

The third rewrite: Agent adoption is shifting from “knowing how to code” to “running in a governable runtime.” Over the past year, everyone has talked about Agents—whether they can code, use tools, or handle long tasks. But once in production, the biggest pain point is rarely “can it generate,” but “can it be governed”: how is state saved, how are permissions isolated, how is data logged, how are failures rolled back, and how are costs measured? Bedrock’s stateful runtime and similar foundational capabilities mean Agents are following a familiar path: from “a hundred flowers blooming at the application layer” to “the emergence of standard infrastructure parts,” and finally to “enterprise-scale procurement.”
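The governance questions above (state, permissions, logging, rollback, cost) can be sketched in a few dozen lines. This is a toy illustration under my own assumptions, not the Bedrock runtime or any real cloud API: `GovernedRun`, the tool names, and the cost units are all hypothetical.

```python
import copy
import time
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class GovernedRun:
    """Toy wrapper around agent steps; not a real cloud runtime API."""
    allowed_tools: set[str]                                   # permission isolation
    state: dict[str, Any] = field(default_factory=dict)       # saved state
    audit_log: list[dict[str, Any]] = field(default_factory=list)
    cost_units: float = 0.0                                   # cost metering

    def step(self, tool: str, fn: Callable[[dict[str, Any]], None], cost: float) -> bool:
        """Run one step; return True on success, False if it was rolled back."""
        if tool not in self.allowed_tools:
            self._log(tool, "denied")
            raise PermissionError(f"tool not allowed: {tool}")
        checkpoint = copy.deepcopy(self.state)    # snapshot taken before the step
        self.cost_units += cost                   # failed attempts are metered too
        try:
            fn(self.state)
        except Exception as exc:
            self.state = checkpoint               # discard partial writes
            self._log(tool, f"rolled_back: {exc}")
            return False
        self._log(tool, "ok")
        return True

    def _log(self, tool: str, outcome: str) -> None:
        self.audit_log.append({"ts": time.time(), "tool": tool, "outcome": outcome})


def lookup_order(state: dict[str, Any]) -> None:
    state["order"] = {"id": 42, "status": "paid"}


def broken_refund(state: dict[str, Any]) -> None:
    state["order"]["status"] = "refunding"            # partial write...
    raise RuntimeError("payment API unavailable")     # ...followed by a failure


run = GovernedRun(allowed_tools={"orders", "refunds"})
run.step("orders", lookup_order, cost=1.0)
run.step("refunds", broken_refund, cost=2.5)
print(run.state["order"]["status"], run.cost_units)
print([entry["outcome"] for entry in run.audit_log])
```

The point of the sketch is that none of these five concerns depends on how smart the model is, which is why they are natural candidates to become standard infrastructure.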

There are also voices questioning the sustainability of this funding (e.g., Gary Marcus’s commentary). I find such skepticism valuable: it reminds us that, beyond the excitement, we must return our focus to whether the business model closes the loop and whether the unit economics hold up.

Source: https://garymarcus.substack.com/p/does-openais-new-financing-make-sense


03

Uncle Guo’s Perspective

My judgment on this is clear: in the next 12 months, the watershed for AI will not be “whose model is slightly smarter,” but “who can turn Agents into controllable enterprise productivity systems.” To achieve this, you need to solve three things simultaneously: Gateways, Foundations, and Governance.

One “actionable piece of advice” for three types of people:

01 If you are an Enterprise CTO/Architect: Upgrade from “selecting models” to “selecting supply chains.”

• Don’t just ask “which one performs best”; ask “which one provides stable supply, stable billing, and stable auditing within your cloud and compliance framework.”

• Now is the window for renegotiation: when the same capability can be purchased via multiple cloud gateways, prices and SLAs finally become negotiable.

02 If you are building AI products or B2B solutions: Don’t get caught up in “building yet another Agent”; focus on “making Agents deliverable.”

• The opportunity lies in the runtime ecosystem: evaluation and regression testing, permission and data boundaries, observability and cost tracking, knowledge updates and versioning.

• Your moat may not be a better answer, but rather being “more controllable, more auditable, and more reviewable.”

03 If you are a Developer/Team Lead: Treat Agents as colleagues, not as features.

• Establish acceptance and rollback mechanisms before talking about speed.

• Turn high-frequency tasks into standardized context assets before talking about scaling.

Simply put: in the Agent era, process and engineering capabilities will be more valuable than “prompt engineering.”
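As one possible reading of “acceptance before speed,” here is a minimal sketch of an acceptance gate: a new Agent version only replaces the live one if it passes a fixed set of regression cases. The golden cases, the two toy agents, and the `promote` helper are all hypothetical names invented for this example.

```python
from typing import Callable

# Hypothetical golden cases: (input, check that the output must pass).
GOLDEN_CASES: list[tuple[str, Callable[[str], bool]]] = [
    ("refund order 42", lambda out: "42" in out),
    ("cancel subscription", lambda out: out.startswith("CANCEL")),
]


def pass_rate(agent: Callable[[str], str]) -> float:
    """Fraction of golden cases the agent passes; a crash counts as a failure."""
    passed = 0
    for prompt, check in GOLDEN_CASES:
        try:
            passed += bool(check(agent(prompt)))
        except Exception:
            pass
    return passed / len(GOLDEN_CASES)


def promote(current, candidate, threshold: float = 1.0):
    """Return the candidate only if it clears the bar; otherwise keep current."""
    return candidate if pass_rate(candidate) >= threshold else current


def agent_v1(prompt: str) -> str:
    return "CANCEL ok" if "cancel" in prompt else f"REFUND {prompt.split()[-1]}"


def agent_v2(prompt: str) -> str:
    return "done"    # shorter and faster, but fails both checks


live = promote(agent_v1, agent_v2)
print(live.__name__, pass_rate(agent_v1), pass_rate(agent_v2))
```

Keeping the current version when the candidate fails is the rollback mechanism in its simplest form; the golden cases are one example of a “standardized context asset” that a team can grow over time.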

I will conclude with one sentence: The true meaning of this funding round is that “AI is beginning to be built like the power grid.” The power grid doesn’t win because of a single generator; it wins because of its supply chain, infrastructure, and dispatch systems. AI is the same.


04

Other Key News Briefs

Amazon Bedrock launches stateful runtime for Agents, lowering the barrier for enterprise Agent adoption

Stateful runtimes turn the most difficult foundational capabilities—“memory/state/secure execution”—into standard parts, accelerating the industrialization of Agents. Source: https://docs.aws.amazon.com/bedrock/latest/userguide/agentcore-runtime.html

Block lays off ~4,000 employees, directly attributing it to AI transformation; the market votes with its stock price

Cases of companies explicitly linking layoffs to AI productivity are increasing; organizational structures will undergo drastic changes sooner than technology. Source: https://www.cnbc.com/2026/02/26/block-laying-off-about-4000-employees-nearly-half-of-its-workforce.html

Anthropic’s refusal of Pentagon request sparks ethical debate: The bottom line is not “something to celebrate,” but “something that should be the norm”

The value here isn’t the gossip, but a reminder to the industry: before large-scale adoption, governance and accountability frameworks must be established first. Source: https://anildash.com/2026/02/27/a-cookie-for-dario/

Apple iPhone/iPad receive NATO restricted-level information processing certification; consumer devices enter higher-security scenarios

As mobile security bars are raised, enterprise AI adoption will rely more on a combination of “on-device capabilities + cloud-side governance.” Source: https://nr.apple.com/Do0I6B8WX0

Google Translate launches “Understand/Ask” interactive translation; translation moves from output to explanation

Language tools are upgrading from “literal converters” to “understanding layers,” which will accelerate benefits in education, cross-border collaboration, and customer service. Source: https://blog.google/products-and-platforms/products/translate/translation-context-ai-update/


05

Trends and Opportunities

Today’s headline reveals three longer-term trend lines. I suggest using them as the source for your project list next quarter:

01 The Super-Capital era has begun: Model companies will increasingly resemble heavy-asset infrastructure companies, with deepening ties between compute, energy, talent, and channels.

02 Agent foundation standardization: Stateful runtimes, permission isolation, audit logging, and cost metering will move from “in-house R&D” to “standard cloud services.”

03 Governance as a product: The most valuable B2B tools of the future may not be models that answer better, but the governance layers that make models “usable, controllable, and accountable” within an enterprise.

For content creators/independent developers, there is also a realistic opportunity: while the giants are busy building the power grid, the market will see a massive demand for the “last mile.” You don’t have to build the generator, but you can build a critical component in the substation.